We’ve entered an extremely exciting time for PC gaming, with promising new memory technologies, die shrinks and increasingly powerful processors ushering in unparalleled graphics. But the biggest buzz this year isn’t around a piece of hardware; it’s around an API – Microsoft’s DirectX 12.

Much has been made of DirectX 12’s multi-threaded improvements, reduced driver overhead, better usage of compute functionality and all manner of other features which will make games better, but there’s one aspect which hasn’t been so well documented – DX12’s Explicit Multiadapter.

AMD’s Head of Technical Marketing, Robert Hallock, has put together a blog post detailing how DX12 will handle multiple graphics cards in your system. We’ve previously discussed some of these details in our DX12 Build 2015 analysis, but Robert has since clarified a few points.

The history of putting two graphics cards in your system in the hope of increasing performance is a long one – 3dfx made the practice rather popular in the late ’90s with its Voodoo 2 graphics cards. But until now, DirectX hasn’t had specific features or tools to support multi-GPU setups. The responsibility has instead fallen on AMD and Nvidia, with their CrossFire and SLI technologies respectively, to work with developers and implement per-game profiles so the hardware can render using multiple GPUs – including even weaker integrated GPUs such as those found in APUs.

Square Enix’s Witch Chapter 0 [cry] DirectX 12 demo leverages the power of DirectX 12’s multi-GPU support to render a staggering 63 million polygons, all beautifully lit, textured, anti-aliased and gorgeous.

Let’s be clear – we’re not saying DX11 prevents multi-GPU rendering – but it doesn’t support it as well as it should. Instead, software uses AFR (Alternate Frame Rendering) to help push the pixels. Unfortunately, AFR isn’t without its own set of drawbacks.

Firstly, the GPUs ideally need to be of the same type – so for example, you’d SLI a pair of GTX 970s, or CrossFire a pair of R9 390s. You can certainly try your hand at pairing an R9 290 with an R9 290X, but the faster card will ‘downclock’ – switching to the lower frequencies and shader count of the ‘lesser’ card to keep the workload balanced between the two GPUs.

DirectX 11’s AFR also has another problem – latency. This has been improved – but let’s say you’ve got two GPUs in your machine – we’ll call them GPU A and GPU B for ease. GPU A will render the even frames, while GPU B will render the odd frames. Essentially, the GPUs use buffers to store these frames of animation and show them as and when needed; unfortunately this can lead to stutter and mouse lag – something gamers actively try to avoid (particularly in competitive or fast-paced games).
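
To make the alternating pattern concrete, here is a minimal Python sketch of how AFR deals frames out to two cards. It is purely illustrative: `afr_assign` and `GPU_NAMES` are hypothetical names made up for this example, not part of DirectX or any driver, and real drivers also have to queue and pace the finished frames.

```python
# Minimal AFR sketch: frames are dealt out to the GPUs in turn.
# afr_assign() and GPU_NAMES are hypothetical, not a DirectX or driver API.
GPU_NAMES = ["GPU A", "GPU B"]


def afr_assign(frame_index: int, gpu_count: int = len(GPU_NAMES)) -> int:
    """Return the index of the GPU that renders the given frame."""
    return frame_index % gpu_count


# Even frames land on GPU A, odd frames on GPU B.
schedule = [(frame, GPU_NAMES[afr_assign(frame)]) for frame in range(6)]
for frame, gpu in schedule:
    print(f"frame {frame} -> {gpu}")
```

Because each card works on a whole frame of its own, several frames are in flight at once, which is where the buffering (and the potential for lag) comes from.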

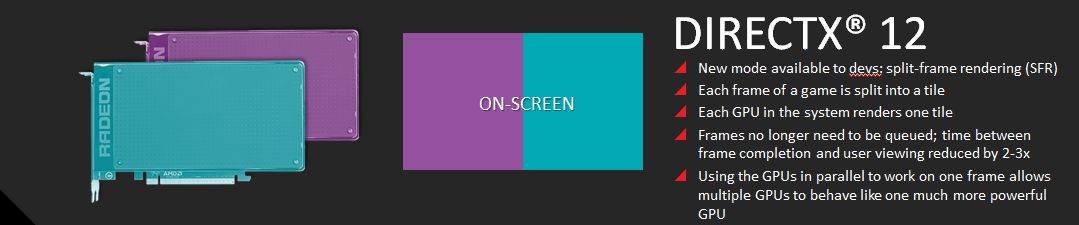

Split-Frame Rendering (used with what are known as Linked GPUs) is new to DirectX 12 and is fairly simple in execution. The application (okay, let’s be honest here… game) is effectively ‘seeing’ one GPU. SFR breaks each frame of animation into multiple smaller tiles and simply assigns these ‘tiles’ to the GPUs.
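
As a rough illustration of the idea, the Python sketch below cuts a frame into tiles and hands them to the GPUs in turn. The tile size, the round-robin distribution and the function names `split_into_tiles` and `assign_tiles` are all assumptions made for this example; how a real engine sizes and balances its tiles is up to the developer.

```python
from typing import List, Tuple

# A tile is (x, y, width, height) in pixels.
Tile = Tuple[int, int, int, int]


def split_into_tiles(width: int, height: int, tile_w: int, tile_h: int) -> List[Tile]:
    """Cut a width x height frame into tiles, clipping any that overhang the edge."""
    tiles = []
    for y in range(0, height, tile_h):
        for x in range(0, width, tile_w):
            tiles.append((x, y, min(tile_w, width - x), min(tile_h, height - y)))
    return tiles


def assign_tiles(tiles: List[Tile], gpu_count: int) -> List[List[Tile]]:
    """Deal the tiles out round-robin (an assumed policy) to each GPU's work list."""
    work: List[List[Tile]] = [[] for _ in range(gpu_count)]
    for index, tile in enumerate(tiles):
        work[index % gpu_count].append(tile)
    return work


# Hypothetical example: a 1080p frame in 480x270 tiles, shared by two GPUs.
tiles = split_into_tiles(1920, 1080, 480, 270)
work = assign_tiles(tiles, gpu_count=2)
for gpu, gpu_tiles in enumerate(work):
    print(f"GPU {gpu}: {len(gpu_tiles)} tiles")
```

Unlike AFR, every GPU here contributes to the same frame, so there is no need to hold back whole frames while the other card catches up.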

In essence, each card has its own rendering queue and its own local resources (so in other words, it’ll store things such as its own texture or geometry data). As discussed at Build 2015, these two pools of information aren’t held in isolation, with either card ready and able to share its information with the other if it’s needed. It can do this via either a dedicated bridge between the two devices, or more conventional means such as the PCIe bus.

Asymmetric Multi-GPU (known as Unlinked GPUs) is perhaps the more interesting, if more complex, piece of technology. It allows the game engine to assign each card a workload that’s appropriate for its level of performance. Consider for a moment that many gamers already have a second GPU built right into their systems which is currently sitting idle. Under DirectX 11, the IGP (Integrated Graphics Processor) on many Intel CPUs and AMD APUs is currently wasted die space: with the discrete GPU (say a Radeon R9 290 or a GTX 970) taking over the duties, the IGP is effectively shut down.

Now, with DirectX 12’s Asymmetric Multi-GPU, this is no longer the case: instead, the weaker GPU can be tasked with physics or lighting. During Microsoft’s Build 2015 DX12 presentation, the team used an Intel processor’s integrated graphics to handle the post-processing of the scene after the discrete 3D card (an Nvidia GeForce) finished rendering the bulk of the scene – such as the 3D geometry and complex calculations such as realistic lighting and shadows. The Intel IGP could then add blurs, perform anti-aliasing or perhaps apply warping if you’re going to use it for a virtual reality display. To do this, DX12 tells the dedicated GPU to copy the ‘finished’ frame buffer from its own memory, and the system then writes it across to the other GPU.
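
To see why handing post-processing to the IGP can help, here is a deliberately simplified timing model in Python. Every number in it is hypothetical, and the function names are made up for this sketch; it also assumes the discrete card can start the next frame while the IGP is still finishing the previous one, which is an assumption on our part rather than something Microsoft has spelled out.

```python
def serial_frame_ms(render_ms: float, post_ms: float) -> float:
    """One GPU does everything: render, then post-process, before the next frame."""
    return render_ms + post_ms


def pipelined_interval_ms(render_ms: float, copy_ms: float, igp_post_ms: float) -> float:
    """Time between finished frames when the two stages overlap (slowest stage wins)."""
    return max(render_ms, copy_ms + igp_post_ms)


def pipelined_latency_ms(render_ms: float, copy_ms: float, igp_post_ms: float) -> float:
    """Time for a single frame to pass through render, copy and IGP post-processing."""
    return render_ms + copy_ms + igp_post_ms


# Hypothetical timings in milliseconds, chosen only for illustration.
RENDER, POST_ON_DISCRETE, COPY, POST_ON_IGP = 14.0, 3.0, 1.0, 8.0

single = serial_frame_ms(RENDER, POST_ON_DISCRETE)
interval = pipelined_interval_ms(RENDER, COPY, POST_ON_IGP)
latency = pipelined_latency_ms(RENDER, COPY, POST_ON_IGP)

print(f"single GPU:     {single:.1f} ms per frame ({1000 / single:.1f} FPS)")
print(f"discrete + IGP: {interval:.1f} ms per frame ({1000 / interval:.1f} FPS)")
print(f"discrete + IGP: {latency:.1f} ms from start to finished frame")
```

In this toy model the frame rate goes up because the discrete card is freed from post-processing, but each individual frame takes longer to complete, so the copy and IGP stages need to be quick for the trade to be worthwhile.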

You’ll also notice this allows GPUs from multiple vendors, and with completely different configurations, to work together. Unfortunately there’s still been little discussion from AMD, Nvidia or Microsoft as to whether you’ll be able to put a GeForce and a Radeon in the same system… likely due to the fierce competition between the two companies – but even so, it’s looking increasingly likely. During the Build conference demo using the IGP, frame rates and, just as importantly, frame latency seemed a non-issue. Remember, ‘average’ and ‘minimum’ frame rates only tell half the story; how consistently the frames arrive matters too. For an extreme example – if a single-GPU configuration has an average frame rate of 64 FPS, a max of 72 and a min of 55, that’s considerably better than a twin-GPU configuration with an average of 69, a max of 81 and a min of 42, because the frame rate swings far less.
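
The frame rate example is easier to judge once the numbers are converted into frame times. The short Python sketch below does the arithmetic using the figures quoted above; the helper names `frame_time_ms` and `frame_time_swing_ms` are ours, and the ‘swing’ is only a crude stand-in for a proper frame-time analysis.

```python
def frame_time_ms(fps: float) -> float:
    """Convert a frame rate into the time each frame takes, in milliseconds."""
    return 1000.0 / fps


def frame_time_swing_ms(max_fps: float, min_fps: float) -> float:
    """Gap between the slowest and fastest frame times implied by min and max FPS."""
    return frame_time_ms(min_fps) - frame_time_ms(max_fps)


# Figures taken from the example in the text: (average, max, min) FPS.
configs = {
    "single GPU": (64, 72, 55),
    "twin GPU": (69, 81, 42),
}

for name, (avg_fps, max_fps, min_fps) in configs.items():
    print(
        f"{name}: avg {frame_time_ms(avg_fps):.1f} ms, "
        f"worst {frame_time_ms(min_fps):.1f} ms, "
        f"swing {frame_time_swing_ms(max_fps, min_fps):.1f} ms"
    )
```

The twin-GPU setup wins on average, but its slowest frames take noticeably longer and the gap between its best and worst frames is more than twice as wide, which is what you feel as stutter.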

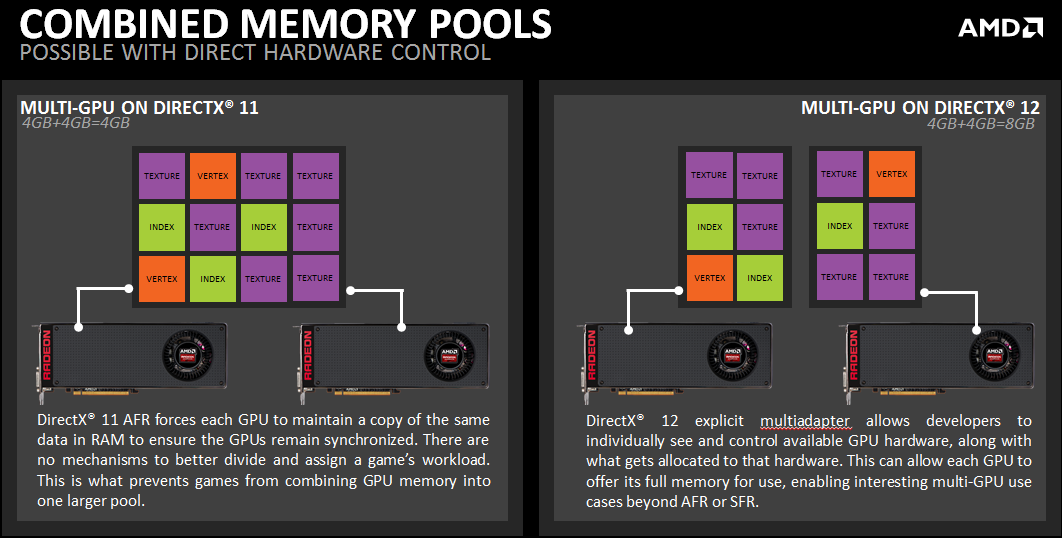

Finally – let’s talk about VRAM. As you’ll probably know, discrete graphics cards come equipped with their own dedicated onboard memory, with medium to high-end cards using GDDR5 (though High Bandwidth Memory is slowly entering the fray) and lower-performing video cards loaded with slower DDR3 RAM. With DirectX 11’s AFR, each GPU must hold an identical copy of the data instead of its own unique data. This means that having two graphics cards running in either CrossFire or SLI doesn’t double the VRAM; instead the system effectively has access to the same ‘amount’ as it would with a single card.

So two GTX 970s, each equipped with 4GB of VRAM, don’t give you 8GB; because the data must be identical, you’re still looking at only 4GB in total… very important to remember for dual-GPU cards such as the Radeon R9 295X2 or the GTX 690.

DirectX 12 switches things around – as we touched on earlier in this article, each GPU has its own resident data, meaning the amount of RAM available to the game engine increases, though from what Microsoft and developers have said, it’s probably not quite doubling the RAM. Using the GTX 970 example again, ‘some’ data per scene will likely need to be mirrored (such as certain texture data, perhaps some geometry or maybe even just driver info), so the game won’t have a ‘full’ 8GB to use. But even if the number (as an example) turns out to be 6 or 7GB (likely depending on optimization, the game and other factors), it’s a significant improvement over AFR. Considering 4K displays are already cheap enough to enter the mainstream, we’re seeing a lot of 144Hz 1440p monitors, and let’s not forget about the extreme demands of Virtual Reality, this is only a good thing, eh?
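
The VRAM arithmetic can be sketched in a few lines of Python. This is a back-of-the-envelope model only: `usable_vram_gb` is a hypothetical helper, and the amount of mirrored data is a made-up input, since nobody has published real figures for how much a DX12 game will need to duplicate.

```python
def usable_vram_gb(per_card_gb: float, gpu_count: int, mirrored_gb: float) -> float:
    """Estimate the unique data a game can hold across several identical cards.

    mirrored_gb is the portion every card must keep its own copy of; it only
    counts once, while the remainder of each card holds unique data.
    """
    if not 0 <= mirrored_gb <= per_card_gb:
        raise ValueError("mirrored_gb must be between 0 and per_card_gb")
    return mirrored_gb + gpu_count * (per_card_gb - mirrored_gb)


# Two GTX 970s with 4GB each, as in the example in the text.
print(usable_vram_gb(4, 2, mirrored_gb=4))  # AFR: everything mirrored
print(usable_vram_gb(4, 2, mirrored_gb=2))  # hypothetical DX12 case, 2GB mirrored
print(usable_vram_gb(4, 2, mirrored_gb=1))  # hypothetical DX12 case, 1GB mirrored
```

With everything mirrored the pair behaves like a single 4GB card, while mirroring only 1 to 2GB lands on the 6 to 7GB range mentioned above.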

Naturally, not all developers will make games which properly leverage DirectX 12’s multi-GPU features, and for those creating less demanding titles (2D indie games such as Limbo, for example) DirectX 11 isn’t going to disappear anytime soon. But DirectX 12’s future is looking fairly certain: it can leverage a massive number of CPU threads and offers lower driver overhead, an increased number of draw calls, better compute functionality, and exciting technology such as ExecuteIndirect, which allows GPUs to issue their own commands, reducing CPU and PCIe overheads.

Hold on to your butts, it’ll be an exciting next couple of years!

Check out AMD’s community post.