Since announcing DirectX 12 at last year’s GDC, Microsoft has slowly drip-fed details of the upcoming API to developers, gamers and the press. At Build 2015 we learned that the API is not only largely complete, but will ship with several incredible new features likely to change how we view PC gaming.

We’ll first get a broad overview of how DX12 is shaping up and how developers are porting their code and engines, and discuss a few features. Later in the article we’ll go over multi-GPU rendering and technical demos. If you already have a solid understanding of DirectX 12’s benefits and development, you might prefer to scroll down to the multi-GPU section.

Max McMullen, lead of DirectX development, took the stage, eager to unveil what Microsoft and its partners have been working on over the past several months. He proudly announced that the API is largely complete and that the SDK is virtually in its retail form, aside possibly from a few flags (at a basic level, think functions) which might be adjusted for compatibility.

The drivers from the three major vendors (AMD, Intel and Nvidia) are now working well, with bugs mostly eliminated and great performance. He also said now is a great time to start developing DX12 applications: according to Steam’s February hardware survey, about 50% of gamers have DX12-compatible hardware, and the number is set to rise rapidly over the coming months, to an estimated 67% by Holiday 2015.

He also pointed out that developers are flocking to DirectX 12, possibly faster than even D3D9 (which until now had enjoyed the fastest adoption rate of any Microsoft API). Microsoft is enticing developers with robust tool chains, tight integration with Visual Studio and powerful debugging tools. These tools won’t simply help you figure out what’s causing a crash; they also let you inspect specific parts of code or assets and understand how different iterations will affect both GPU and CPU performance.

Microsoft’s strategy of switching to a Universal Windows Platform is well documented, and DirectX 12 is at the forefront of this shift. Snail Games, the developers behind King of Wushu, testified that they ported their Xbox One code over to the PC in less than six weeks, and within that short space of time the game was not just running, but showing considerable improvements over DirectX 11.

Frame rate gains were relatively modest at only ten percent, but one must remember they’ve barely begun optimization. With just two engineers working on the project for six weeks, the code wasn’t just working on DX12, but sported higher GPU utilization than DX11 (about ten percent higher) and 1.5x as many enemies on screen at once.

They were using parallel command lists recorded across multiple CPU threads, along with the lower per-core CPU overhead. In short, not only were they feeding the GPU from multiple CPU cores, which is of course something we’ve discussed multiple times by now, but because each of those threads carries substantially lower overhead, performance goes through the roof.
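To make the idea of parallel command lists a little more concrete, here is a minimal sketch in Python. It is a toy model, not Direct3D code: `record_command_list` and `build_frame` are hypothetical names, the "commands" are just strings, and Python threads will not show a real speed-up. It only illustrates the structure, where each worker records its own list independently and a single thread submits the lists in a fixed order.

```python
from concurrent.futures import ThreadPoolExecutor


def record_command_list(objects):
    """Record draw commands for one slice of the scene (runs on a worker thread)."""
    return [f"draw({name})" for name in objects]


def build_frame(scene, workers=4):
    """Split the scene into chunks, record each chunk in parallel, submit in order."""
    chunk = max(1, -(-len(scene) // workers))  # ceiling division, at least 1
    slices = [scene[i:i + chunk] for i in range(0, len(scene), chunk)]
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # pool.map returns results in the same order as the input slices.
        command_lists = list(pool.map(record_command_list, slices))
    # Submission happens once, on the calling thread, in a deterministic order.
    return [cmd for command_list in command_lists for cmd in command_list]
```

Recording is the part that scales across cores; submission stays cheap and ordered, which is why the final frame looks the same no matter how many workers recorded it.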

This overhead and draw call advantage can be seen in our API overhead testing: DX12 with all but one CPU core disabled outpaces DX11 with all CPU cores and Hyper-Threading enabled. Actually, ‘outpaces’ doesn’t do it justice; DX12 with one CPU core achieves 4x the draw calls of DX11 with all CPU cores available. With all cores available, an R9 290X ran with 17x the number of draw calls.

This also leads into Pipeline State Objects, where the different stages of the graphics pipeline are treated very differently compared to DX11. In D3D11, everything was fine grained, and essentially each ‘state’ was an orthogonal object (orthogonal, in programming, means it can be used without consideration of how its use will affect something else). Examples include the input assembler state (which reads index and vertex data), the pixel shader and the rasterizer state, to name a few.

This approach doesn’t gel well with modern hardware, as modern graphics cards tend to merge certain stages or handle them differently from the way D3D11 models them. This means that the driver cannot resolve the final hardware state until all of the individual states are set, leading to greater overhead and thus fewer draw calls.

DirectX 12 attempts to fix this with Pipeline State Objects (PSOs), which can be created on a separate thread and compiled at run time. The whole graphics pipeline state is unified into a single object, so the driver and hardware can convert the PSO into whatever native state is required.
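As a rough illustration of why bundling state helps, the sketch below models a PSO as an immutable description that is "compiled" once and then reused. Everything here is hypothetical (`PipelineDesc`, `PipelineCache` and the field names are made up for the example, and the compile step is a placeholder), so treat it as a picture of the idea rather than how any driver actually works.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PipelineDesc:
    """All pipeline state in one immutable, hashable description."""
    vertex_shader: str
    pixel_shader: str
    blend_mode: str
    depth_test: bool


class PipelineCache:
    """Compiles each unique description once and hands back the cached result."""

    def __init__(self):
        self._compiled = {}
        self.compile_count = 0

    def get(self, desc):
        pso = self._compiled.get(desc)
        if pso is None:
            # Placeholder for the expensive driver work of resolving state.
            self.compile_count += 1
            pso = ("compiled", desc)
            self._compiled[desc] = pso
        return pso
```

Because the full state is known when the object is built, the expensive work happens once up front; switching pipelines during a frame becomes a lookup instead of a re-validation.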

Square Enix’s Ivan Gavrenkov weighed in on the API, and said he believes that “greater control over the gpu” is essentially the greatest benefit of Microsoft’s updated API. Game or application developers are better able to control how the GPU’s resources are allocated, and while it’s certainly possible to leave everything driver controlled, if you want to jump in and control certain resource bindings yourself, you’re more than welcome.

You’re still able to treat everything much as you did in DX11 (that is, 128 textures and 16 buffers), but you’ll likely be forced to cache everything and manage data yourself (particularly a problem with high quality assets and limited resources). One solution is to bind fewer resources for a specific task and enjoy better performance. It’s a juggling game, and no doubt as developers become comfortable with DX12 and the profiling tools, they’ll get better at figuring out how to squeeze the best results out of the hardware.

For reference, a “resource” is a collection of data (textures, for example, or other such information), and binding simply means you’re specifying the source of that data. This ties in with the memory management of DirectX 12, where developers allocate heaps of memory for various resources on the GPU. If they find they’re running out of memory on the GPU and are unable to load new assets, they can simply swap data off the GPU and back into the system’s main memory. In the case of a typical PC, it would travel from the graphics card’s RAM, through the PCIe connection and back into the slower (but more abundant) DDR3 RAM.
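The swapping described above can be pictured with a small sketch. This is a toy model under stated assumptions: `GpuHeap` is a hypothetical class, sizes are plain megabyte counts, and the least-recently-used eviction policy is our choice for the example, not something DirectX 12 prescribes (the developer decides what to move and when).

```python
from collections import OrderedDict


class GpuHeap:
    """Toy model of GPU memory that spills the oldest resources to system RAM."""

    def __init__(self, capacity_mb):
        self.capacity_mb = capacity_mb
        self.resident = OrderedDict()  # name -> size, least recently used first
        self.system_memory = {}        # resources swapped out over PCIe

    def used_mb(self):
        return sum(self.resident.values())

    def make_resident(self, name, size_mb):
        if size_mb > self.capacity_mb:
            raise ValueError("resource is larger than the whole heap")
        if name in self.resident:
            self.resident.move_to_end(name)  # mark as recently used
            return
        self.system_memory.pop(name, None)   # bring it back if it was swapped out
        while self.used_mb() + size_mb > self.capacity_mb:
            victim, victim_size = self.resident.popitem(last=False)
            self.system_memory[victim] = victim_size
        self.resident[name] = size_mb
```

For example, with a 100 MB heap holding a 60 MB and a 30 MB texture, requesting a further 40 MB pushes the 60 MB texture out to system memory to make room.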

Ivan then pointed out that because of the lower draw call cost, games will naturally become richer, with a greater number of lights able to cast shadows on the scene, increased draw distance (since the engine can handle more objects on screen) and a greater number of unique, non-cloned objects. Part of this is because the GPU is now able to issue its own commands, several at the same time in fact, through Execute Indirect.
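Execute Indirect is easier to picture with a small model. In the sketch below, a stand-in for a GPU culling pass writes draw arguments into a buffer, and a single call then consumes whatever the buffer contains. The names (`DrawArgs`, `gpu_cull`, `execute_indirect`) and the distance-based visibility test are assumptions made for illustration; this is not the real D3D12 signature.

```python
from dataclasses import dataclass


@dataclass
class DrawArgs:
    """One record in the argument buffer: what to draw and how much of it."""
    mesh: str
    index_count: int
    instance_count: int


def gpu_cull(objects, max_distance):
    """Stand-in for a GPU pass that writes draw arguments for visible objects only."""
    return [DrawArgs(o["mesh"], o["index_count"], 1)
            for o in objects if o["distance"] <= max_distance]


def execute_indirect(argument_buffer, max_commands):
    """A single CPU-side call; the draws performed depend on the buffer's contents."""
    return [f"draw {a.mesh} x{a.instance_count} ({a.index_count} indices)"
            for a in argument_buffer[:max_commands]]
```

The point of the pattern is that the CPU issues one call regardless of how many objects survive culling, so the per-object cost moves off the CPU.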

Mr. Gavrenkov also highlighted that a new API was sorely needed to take advantage of newer GPU features. Despite GPUs growing enormously in performance every year, CPU speeds haven’t kept pace, and an antiquated API just isn’t able to use modern GPUs to their fullest potential. Khronos Group, which is responsible for the development and maintenance of the Vulkan API, highlighted similar concerns, and it’s likely Vulkan will be D3D12’s greatest rival in the marketplace.

Multiadapter D3D12 – Multiple GPUs In DirectX 12

DirectX 12 changes things significantly over the old guard, allowing it to target multiple GPUs (including better use of multiple discrete GPUs, or mixing an integrated GPU with a discrete GPU) and offering large performance boosts. It’s possible for developers to use multiple sets of threads to feed these GPUs, and each GPU has its own independent memory allocation. That is to say they can each be allocated their own assets, based on what is being asked of them, but they can also share the same piece of information if the developers choose to, by setting up a special memory space.

To this end, multiple GPU configurations can be handled in two ways inside DirectX 12, and it will be interesting to see how developers opt to use the functionality. Should these techniques prove popular and well supported among game developers, they will give users extremely compelling reasons to consider multiple GPU setups, particularly if high resolution or VR gaming is their goal.

Linked GPUs look like a single GPU to the end application, but developers may create their own individual queues (for rendering and other functions) for each individual processor. Furthermore, each GPU can have its own local resources and assets, but it is possible for a card to access and read memory from another card. So, let’s assume you have a two card setup: GPU A could access the data that’s resident in GPU B’s memory either over the PCIe bus, or via a dedicated bridge or connection between those two cards.

Unlinked GPUs, meanwhile, are a little different, as each GPU can have its own individual rendering queue. This allows you to have different GPU configurations perform a variety of different tasks. For example, you could have the discrete GPU (let’s say a GeForce GTX 980) perform the main bulk of the graphics rendering, then send each completed frame to the iGP (integrated graphics, which could be part of an Intel CPU, for instance) to have it perform either post processing or the warping necessary for Virtual Reality displays.

The key when using graphics cards of mismatched performance is to decide what tasks to assign the weaker card, or you will find that the IGP will slow down performance and negate the benefit. This is one of the reasons Microsoft, for their demo, opted to use Post Processing, because the workload is very similar on a frame by frame basis and the amount of data passed over is also very similar.
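A back-of-the-envelope model shows why the choice of task matters. The sketch below assumes the two GPUs work as a simple two-stage pipeline in steady state, and that the cross-adapter copy is charged to the integrated GPU’s stage; both are simplifications, the function names are hypothetical, and the millisecond figures in the usage note are made up for illustration rather than measured.

```python
def single_gpu_frame_ms(render_ms, post_ms):
    """Discrete GPU does everything: the two costs simply add up."""
    return render_ms + post_ms


def pipelined_frame_ms(render_ms, copy_ms, igpu_post_ms):
    """Stages overlap across frames, so the slowest stage sets the pace."""
    return max(render_ms, copy_ms + igpu_post_ms)


def worth_offloading(render_ms, post_ms, copy_ms, igpu_post_ms):
    """True if handing post processing to the integrated GPU shortens frame time."""
    return (pipelined_frame_ms(render_ms, copy_ms, igpu_post_ms)
            < single_gpu_frame_ms(render_ms, post_ms))
```

With illustrative numbers of 25 ms to render, 3 ms of post processing on the discrete card, a 2 ms copy and 10 ms of post processing on the integrated GPU, the pipelined frame time is 25 ms instead of 28 ms. If the integrated GPU needed 30 ms for the same work, it would become the bottleneck at 32 ms and the offload would make things worse.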

To demonstrate, Max and the D3D team took Unreal Engine 4’s source code and programmed a special code path that uses both the integrated and the dedicated GPU (a Titan X), then ‘raced’ the engine running on just the discrete GPU against the discrete and integrated GPUs together. Rather than comparing frame rates, the race was to be the first to render 635 frames of animation. The winner, unsurprisingly, was the multi-adapter configuration.

The image below shows the timeline of how this works: Nvidia’s GPU renders the majority of the 3D work, then the data is copied over to Intel’s GPU to finish off. Despite the large mismatch in performance, frame rate climbed by four FPS and frame time went down by about 3ms.

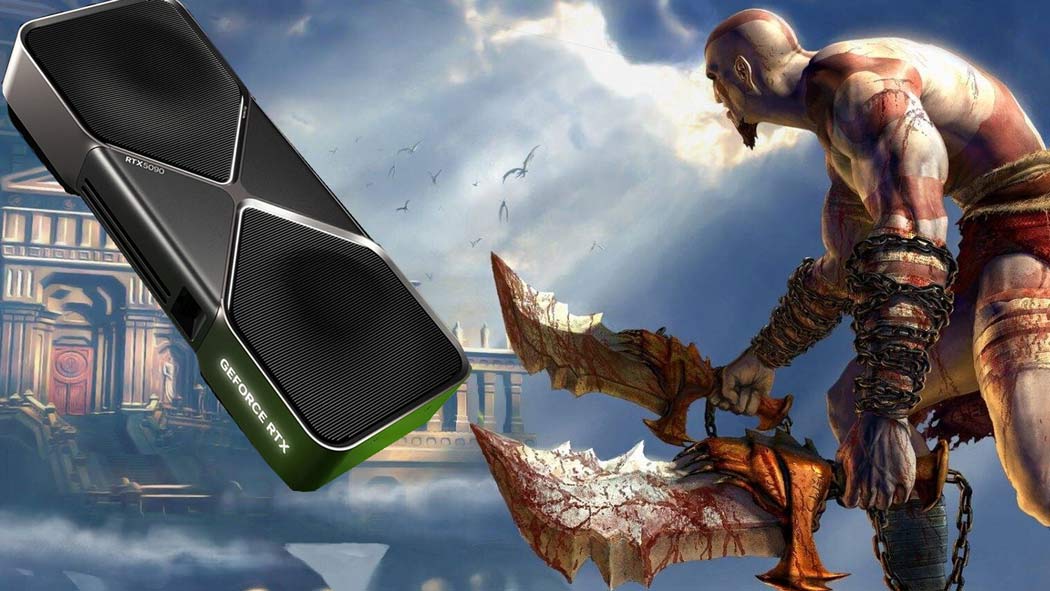

We can’t talk about DX12 and multiple GPUs without discussing the beauty of the Square Enix WITCH CHAPTER 0 [cry] technical demo. Neither Square nor Microsoft confirmed the engine used, but it’s likely to be a modified version of the Luminous Engine (the very same engine powering Final Fantasy 15).

Square’s Witch demo was powered by four Nvidia Titan Xs, and each scene featured a staggering 63 million polygons and 8k by 8k textures, all running in real time at a gorgeous 4k. It’s easily one of the most realistic renderings of human skin or emotion by a computer, and to think it’s being rendered in real time is staggering.

Dirt and makeup deformed as tears rolled down her face, water rippled in pools, and sun and shadows realistically moved across the scene. Human hair and organic looking materials such as feathers and cloth are notoriously difficult to render, but clearly no one had given Square the memo, because the hair moved easily by itself, created by highly complex geometry and isotropic shading.

Her skin is created by multiple textures, layers of lighting and shaders all coming together to create realistic pits in the skin, dimples and pores, to make it all that much more real and human. ‘Skin tech’ like this isn’t particularly new (Ready at Dawn demonstrated it in The Order: 1886, for example), but not to this degree. The PS4 just doesn’t have the ludicrous GPU power to perform these calculations in real time, but if you want a bit of an understanding of what’s going on, check out our analysis.

Despite the use of four Titans to power this, you’ve got to remember the demo was being rendered at 4k, with an absurd, near-reality level of detail. In a few years’ time, we’ll have GPUs with about the power of two Titans anyway, which will be about when games and developers really take advantage of DX12.

It’s difficult to be completely objective when reporting on either of the two big new APIs, because from the perspective of a PC gamer, the future is incredibly exciting. Early on, much of the news and focus around DirectX 12 was of course on the performance gains (primarily multi-threading). Then, as the team slowly implemented new features such as Execute Indirect, PSOs and now improved multi-adapter support, it became hard not to dream about the worlds developers will be able to craft.

Hardware-wise, there are an awful lot of new technologies either recently released or on the horizon, poised to take advantage of D3D12: 4K displays, Virtual Reality, new CPUs (both Skylake from Intel and Zen from AMD) and new GPUs, including AMD’s R9 300 series, which was recently confirmed to be the first GPU ever to use High Bandwidth Memory. And that’s just the tip of the iceberg.

The first wave of games likely won’t be as impressive, as developers slowly familiarize themselves with new features, old programming habits and best practices are turned on their head, and game engines are updated. But this time next year, or in two years, the sky really is the limit.