GDC 2015 is well underway, and Microsoft (along with other key developers) has taken to the stage to tout the benefits of DirectX 12. But the question is, were the earlier reports overblown, leaving a reality that can’t possibly live up to the hype? Well, early indications are extremely encouraging. We’ll be taking a quick look today at our initial DirectX 12 GDC impressions, and as more presentations open up over the next few days we’ll follow up with a nice in-depth analysis.

First, the good news: DirectX 12 is largely complete, and the drivers from all three major vendors (Intel, AMD and Nvidia) are fully functional. Microsoft estimates that by Christmas 2015 about two-thirds of PC gamers will own some form of DX12 hardware, making the new API a pretty sound investment from a developer’s point of view. Around 400 members are currently developing with early SDK access, which equates to about 100 studios.

“Vastly greater developer control how your content can be rendered,” says Max McCullen, the development lead of Direct3D over at Microsoft. He had a big smile on his face; he knew he had the audience (primarily developers) eating out of the palm of his hand at this point. He continued, pointing out that developers will be able to achieve “console level efficiency, performance and control on the PC platform”. If you’re scratching your head and wondering what that means for Microsoft’s Xbox One, he pointed out that there’ll be greater performance and access to the console hardware too, with greater performance on mobile platforms as a side benefit.

After some time, Kasper Engelstoft, Unity Graphics Engineer, was brought on stage to recount his experiences of getting Unity to work on Microsoft’s new API. He pointed out that their studio got their hands on the first version of the SDK in September 2014. They were lucky: their shader pipeline was already fairly compatible with DX12 (generating the correct byte code), so it took just a few weeks to get up and running (albeit not well optimized). Following on from that, the team worked to get their internal graphics tests (which ensure that a scene looks the same under DX11 and DX12) working on the new API. SDK 2 and SDK 3 each broke something and required optimization, but it wasn’t anything they couldn’t handle.

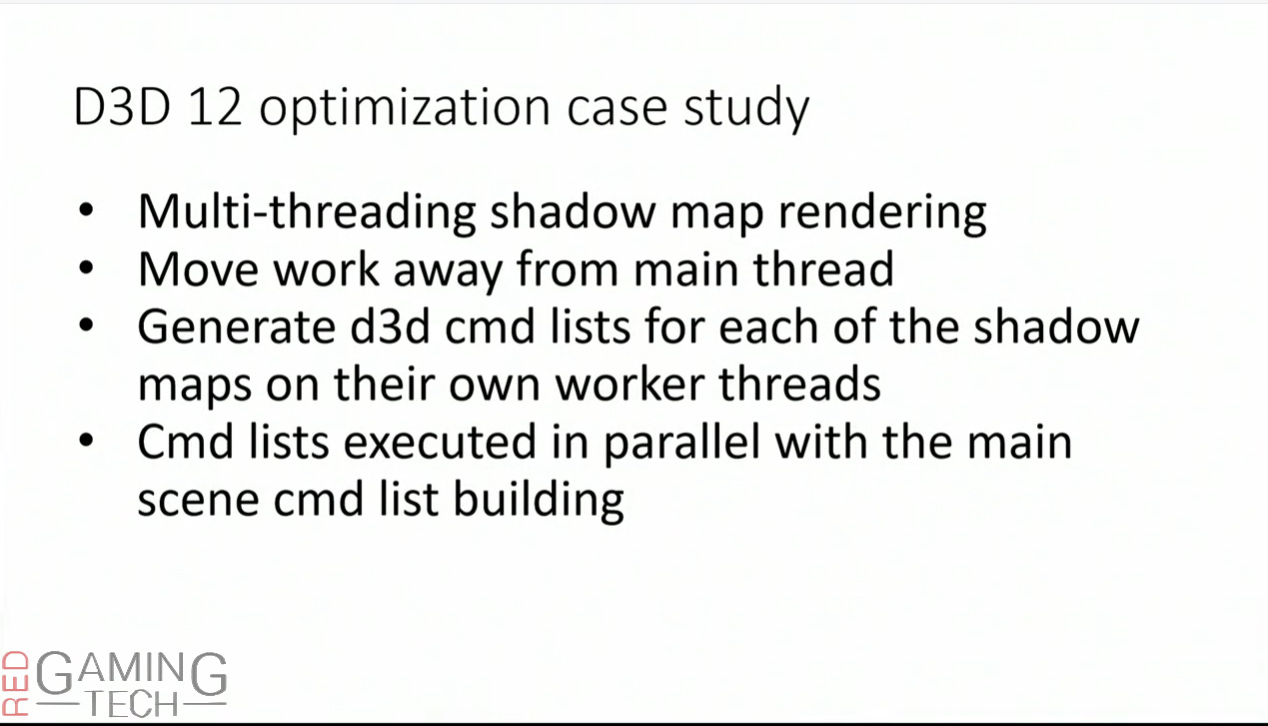

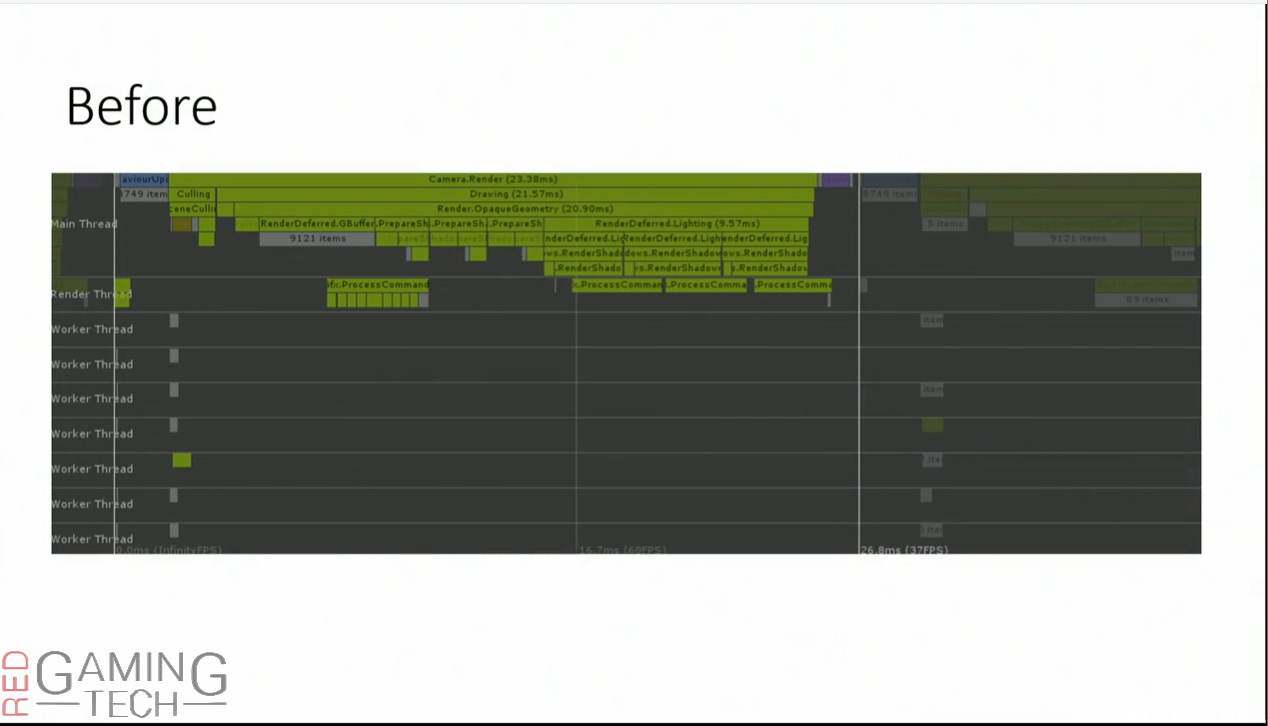

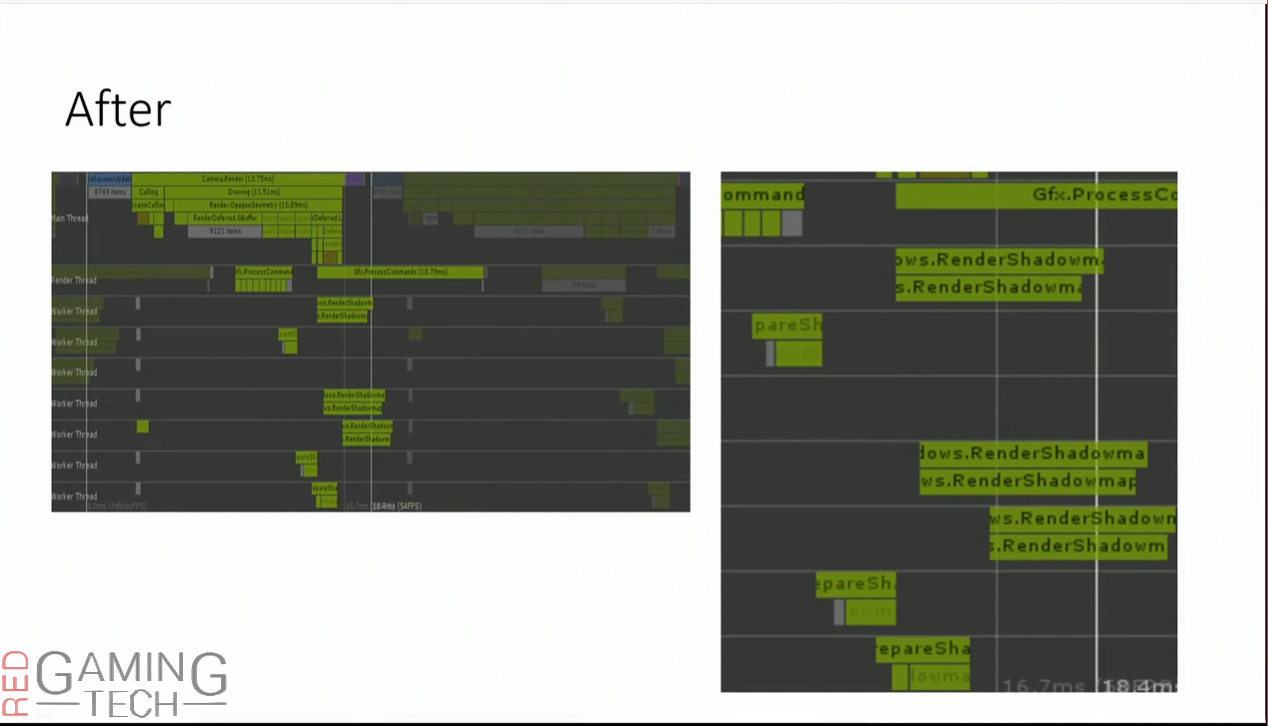

Kasper then decided it was time to show off performance, as that’s what people really want to see, right? He used a fairly simple and routine example: Shadow Maps in the Unity Engine. If you’re unsure what a Shadow Map is, at its most basic it’s the shadowing of a scene. A shadow is created by testing whether a pixel is visible from the light source, by comparing its depth to a z-buffer (or depth image) of the light source’s view, stored in the form of a texture.
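The depth comparison behind a shadow map can be sketched on the CPU in a few lines of C++. This is only an illustration of the idea, not Unity’s code: the `ShadowMap` struct, the `inShadow` function and the small `bias` value are hypothetical names and choices made up for this example.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical CPU-side stand-in for a shadow map: a square grid of depths
// rendered from the light's point of view (smaller value = closer to the light).
struct ShadowMap {
    std::size_t size;          // width and height in texels
    std::vector<float> depth;  // size * size entries, row-major
};

// Returns true when a point is in shadow. (u, v) are the point's coordinates
// in the light's view, each in [0, 1]; pointDepth is its distance from the
// light, in the same units as the stored depths. The bias is an assumed
// fudge factor that stops surfaces from shadowing themselves.
bool inShadow(const ShadowMap& map, float u, float v, float pointDepth,
              float bias = 0.005f) {
    assert(map.size > 0 && map.depth.size() == map.size * map.size);

    // Clamp a [0, 1] coordinate to a valid texel index.
    auto toTexel = [&map](float c) {
        if (c < 0.0f) c = 0.0f;
        if (c > 1.0f) c = 1.0f;
        std::size_t t = static_cast<std::size_t>(c * static_cast<float>(map.size));
        return t >= map.size ? map.size - 1 : t;
    };

    // Depth of the closest surface the light can see in this direction.
    const float nearest = map.depth[toTexel(v) * map.size + toTexel(u)];

    // If something sits closer to the light than our point, the point is shadowed.
    return pointDepth - bias > nearest;
}
```

On a GPU the same comparison runs in a shader for every pixel, with the depth grid stored as a texture.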

The great thing about multi-threading shadow maps is that it’s something developers can easily adopt in their existing engine (well, that was Unity’s experience anyway), so it’s an easy way to get a huge performance gain from DX12’s ability to use multiple cores. Unity constructed an artificial test comprising 7000 objects lit by 3 directional lights. On DX11, with the three shadow maps generated on the main render thread, it took 23ms to render.

On DX12 the ‘main’ rendering thread builds the main scene’s command list (basically acting as a control) while the worker threads generate the shadow maps’ command lists. The lists are then synced up and executed. To put it at its simplest, the first CPU core runs the ‘main’ workload of the graphics engine and delegates the shadow generation to multiple other cores. These “worker” threads generate the commands (instructions) that tell the GPU what to render. Once all of these instructions have been generated, everything is synced up and sent to the GPU to execute. With the Shadow Maps moved away from the ‘main’ thread and onto these worker threads, render time dropped from the original 23ms down to just 13ms, a huge saving of 10ms.
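The record-in-parallel, then sync, then submit pattern can be sketched in portable C++ with `std::thread`. To be clear, this does not call Direct3D 12 at all: `CommandList`, `recordShadowMap` and `buildFrame` are hypothetical stand-ins invented for this example, and a "command" here is just a string.

```cpp
#include <cassert>
#include <cstddef>
#include <string>
#include <thread>
#include <utility>
#include <vector>

// Hypothetical stand-in for a GPU command list: just a list of command names.
using CommandList = std::vector<std::string>;

// Records the commands for one light's shadow map. A real engine would walk
// the shadow casters visible to that light instead of counting objects.
CommandList recordShadowMap(int lightIndex, int objectCount) {
    CommandList list;
    list.reserve(static_cast<std::size_t>(objectCount) + 1);
    list.push_back("bind shadow map " + std::to_string(lightIndex));
    for (int i = 0; i < objectCount; ++i) {
        list.push_back("draw object " + std::to_string(i));
    }
    return list;
}

// Builds one frame's worth of command lists. Worker threads record the shadow
// lists in parallel while the calling (main) thread records the main scene.
// After the join, the lists are returned in the order they would be submitted.
std::vector<CommandList> buildFrame(int lightCount, int objectCount) {
    assert(lightCount >= 0 && objectCount >= 0);

    std::vector<CommandList> shadowLists(static_cast<std::size_t>(lightCount));
    std::vector<std::thread> workers;
    for (int light = 0; light < lightCount; ++light) {
        // Each worker writes only to its own slot, so no locking is needed.
        workers.emplace_back([&shadowLists, light, objectCount] {
            shadowLists[static_cast<std::size_t>(light)] =
                recordShadowMap(light, objectCount);
        });
    }

    // The main thread does its own recording while the workers are busy.
    CommandList mainScene{"draw main scene"};

    // Sync point: every list must be complete before anything is submitted.
    for (auto& worker : workers) {
        worker.join();
    }

    std::vector<CommandList> submission = std::move(shadowLists);
    submission.push_back(std::move(mainScene));
    return submission;
}
```

Calling `buildFrame(3, 7000)` mirrors the shape of Unity’s test (three lights, 7000 objects) and returns four lists: three shadow map lists followed by the main scene. The key design point is that each thread owns its own list, so the only synchronization needed is the join before submission.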

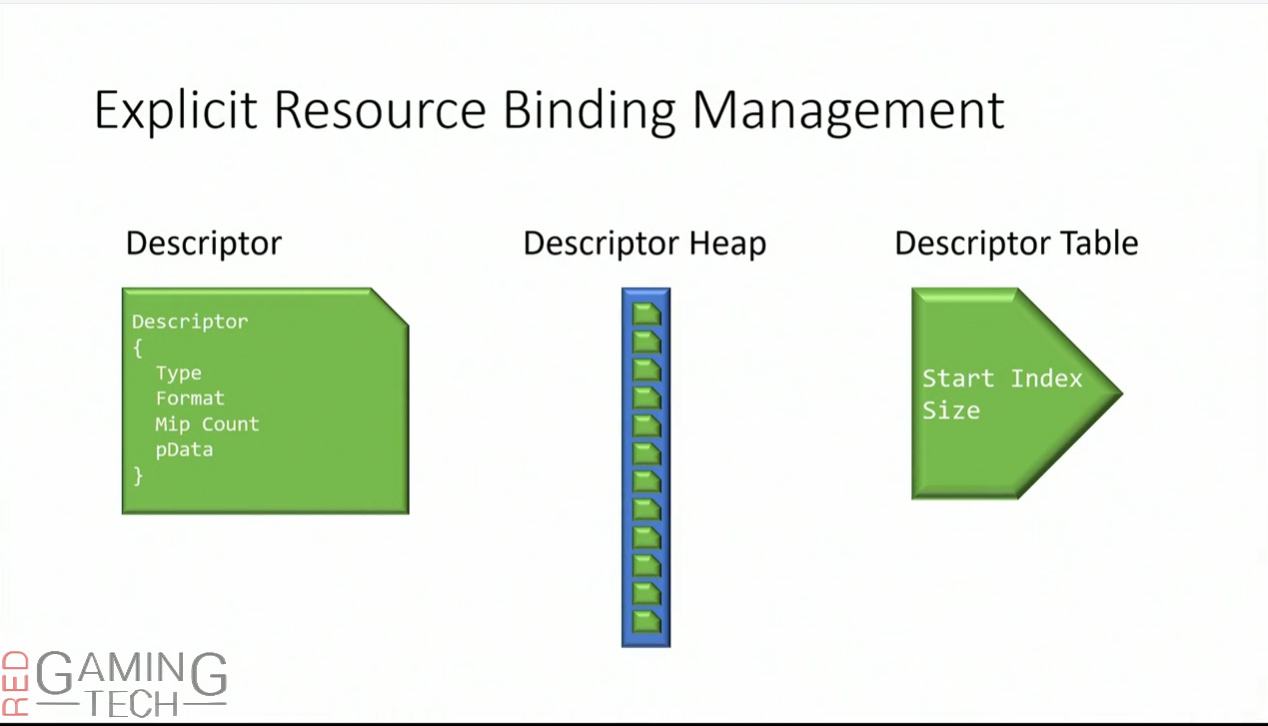

Max McCullen eventually retook the stage, and ExecuteIndirect was something he was clearly very proud of. He noted that while Draw Bundles are very cool, and work really well to help optimize the data, the Graphics Command Processor (the processor on the GPU which takes the rendering commands from the CPU and dispatches them to the GPU’s shaders and other fixed function hardware) has a tendency to be overwhelmed.

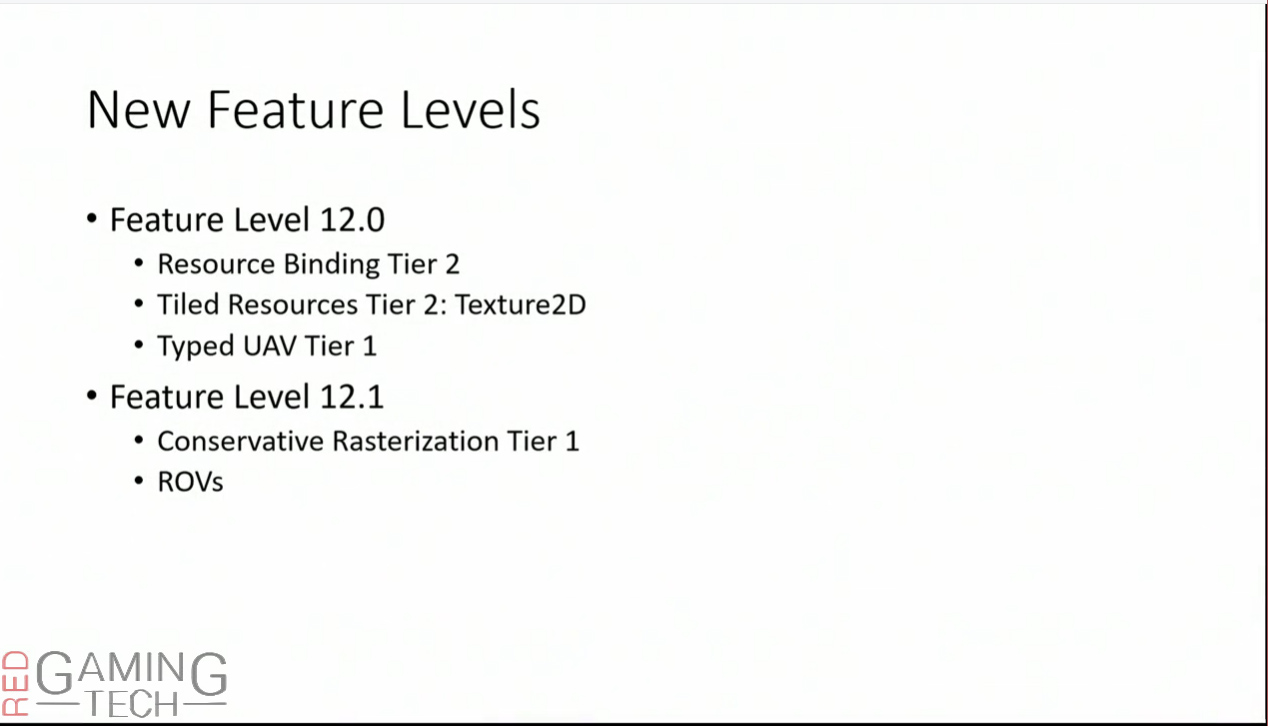

That said, there’s an argument for being able to generate draw calls and other such data directly on the GPU itself, without needing to involve the CPU in the decision. This has numerous benefits. The obvious one is a reduction in CPU overhead, but a variety of other potential benefits exist, including a reduction in PCIe traffic and lower latency. GPUs and their architectures have evolved greatly over a five year period, going from fairly ‘dumb’ pieces of hardware, incapable of really doing anything without a CPU to provide instructions, to being capable of issuing their own basic instructions. Microsoft now has a complete indirect draw and dispatch solution built into current versions of DX12, which runs on all DX12 hardware (DX12.0 and above).

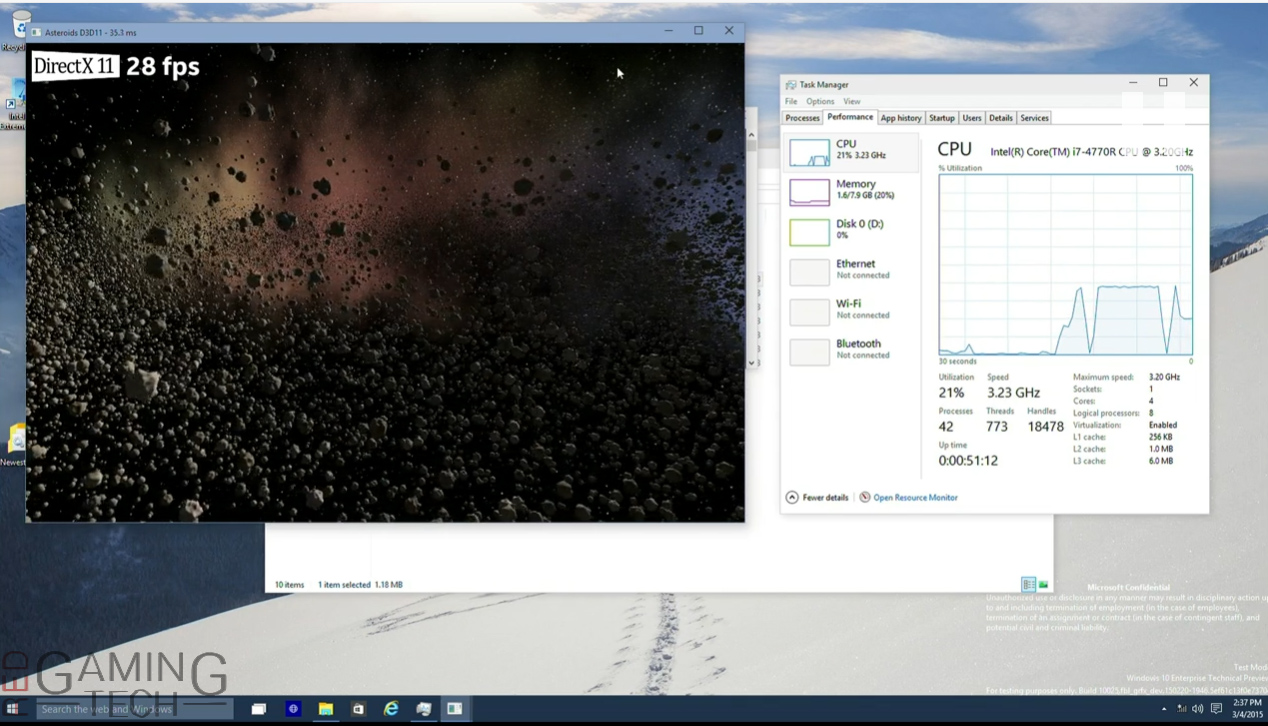

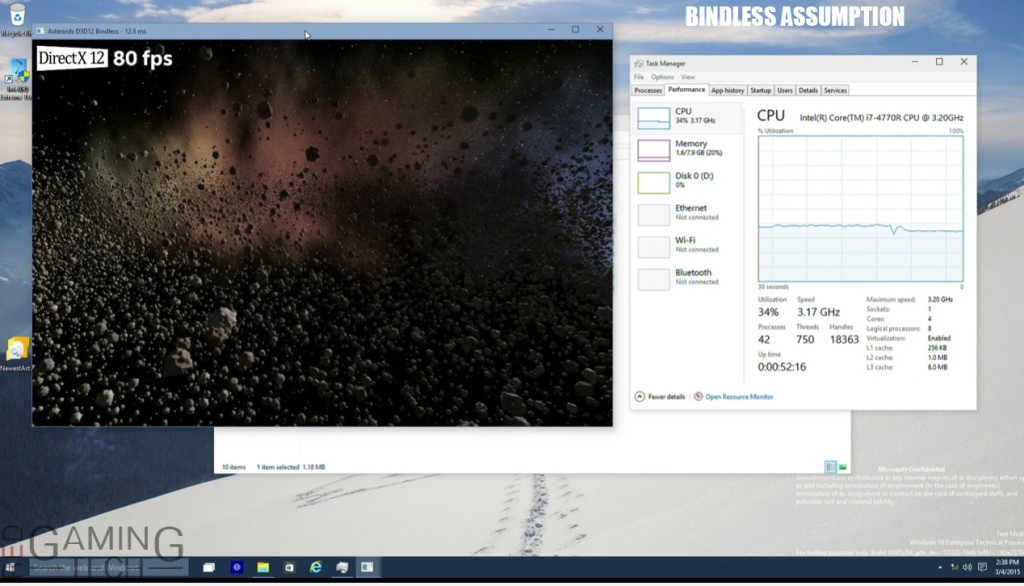

This allows you to generate multiple draw calls with a single CPU instruction, saving a massive amount of CPU usage. The indirect buffer can be either CPU accessible or purely GPU accessible. Eventually he loaded up the Asteroids demo, which was originally constructed by Intel. There was a slight change here, however: MS modified the code to assume everything was bindless, so all of the shader resources were set up up front. Bindless operation expands the number of resources available to shader programs, and even allows dynamic indexing of the resources available to the GPU. In DX11 there was a rather hefty limit

This means that each and every asteroid can be represented with a single call. You might remember that in the Asteroids demo each asteroid was drawn uniquely and had its own parameters. They weren’t simply ‘clones’ of one another.
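To make the idea of an argument buffer concrete, here is a toy C++ model of indirect drawing. It is a sketch under stated assumptions and does not use the real Direct3D 12 API: `DrawArgs`, `FakeGpu` and `buildAsteroidArgs` are hypothetical names, and the mesh sizes are made-up numbers. The point is only that the CPU makes one submission while the "GPU" reads one record per asteroid.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// One record in the (hypothetical) argument buffer: everything needed to
// draw a single asteroid without asking the CPU again.
struct DrawArgs {
    std::uint32_t indexCount;      // indices in this asteroid's mesh
    std::uint32_t firstIndex;      // where the mesh starts in a shared index buffer
    std::uint32_t parameterIndex;  // which per-asteroid parameter block to use
};

// Toy GPU that only counts work, so the effect of one submission is visible.
struct FakeGpu {
    std::uint32_t cpuSubmissions = 0;
    std::uint64_t drawsExecuted = 0;
    std::uint64_t indicesProcessed = 0;

    // A single CPU-side call; the "GPU" then walks the argument buffer and
    // performs one draw per record, up to maxDraws.
    void executeIndirect(const std::vector<DrawArgs>& argumentBuffer,
                         std::size_t maxDraws) {
        ++cpuSubmissions;
        const std::size_t count = std::min(maxDraws, argumentBuffer.size());
        for (std::size_t i = 0; i < count; ++i) {
            // Triangle lists need a multiple of three indices.
            assert(argumentBuffer[i].indexCount % 3 == 0);
            ++drawsExecuted;
            indicesProcessed += argumentBuffer[i].indexCount;
        }
    }
};

// Fills the argument buffer on the CPU for simplicity. On real hardware the
// records could instead be written by the GPU itself, for example after culling.
std::vector<DrawArgs> buildAsteroidArgs(std::uint32_t asteroidCount) {
    std::vector<DrawArgs> args;
    args.reserve(asteroidCount);
    std::uint32_t nextIndex = 0;
    for (std::uint32_t i = 0; i < asteroidCount; ++i) {
        // Assumed mesh sizes: each asteroid differs slightly from its neighbours.
        const std::uint32_t indexCount = 300 + 3 * (i % 8);
        args.push_back({indexCount, nextIndex, i});
        nextIndex += indexCount;
    }
    return args;
}
```

With 1000 asteroids, `executeIndirect` is called once yet 1000 draws are counted, each pointing at its own parameter block, which is the behaviour the demo relies on.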

Switching from DX11 to DX12 increases the frame rate by almost a factor of 3, from 28 frames per second up to the low 70s, but the CPU workload spreads over multiple processor cores and becomes noticeably higher. However, if they assume bindless, DX12 gains another ten percent performance, and CPU usage drops a little as a bonus. But there’s more: if ExecuteIndirect is used, performance goes up another 10 percent (so the frame rate is now 90 FPS, up from the original 28 FPS) and CPU usage drops from 25 percent down to just 9 percent.
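Frame rates and frame times are two views of the same number, and the quoted gains compound rather than add. The helpers below are a small sketch for checking that arithmetic; the function names are made up for this example, and the 72 FPS used in the note afterwards is an assumed stand-in for "low 70s".

```cpp
#include <cassert>
#include <cmath>

// Converts a frame rate in frames per second to a frame time in milliseconds.
double frameTimeMs(double fps) {
    assert(fps > 0.0);
    return 1000.0 / fps;
}

// Converts a frame time in milliseconds to a frame rate in frames per second.
double framesPerSecond(double ms) {
    assert(ms > 0.0);
    return 1000.0 / ms;
}

// Applies the same fractional gain several times in a row (gains compound).
double compoundGain(double baseFps, double gain, int steps) {
    return baseFps * std::pow(1.0 + gain, steps);
}
```

By this arithmetic 28 FPS is about 35.7ms per frame and 90 FPS is about 11.1ms, a little over three times faster. Taking 72 FPS as the DX12 starting point, two successive 10 percent gains give roughly 87 FPS, which is in the same ballpark as the 90 FPS quoted on stage. The same conversion puts Unity’s 23ms and 13ms shadow map results at roughly 43 and 77 frames per second.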

Fable Legends was next on the agenda, and a few demos were used to show off the performance boost of DirectX 12 versus the more traditional DX11. In a live video, DX11 was running at just 900P while DX12 was running at 1080P, and D3D12 was also slightly faster (by a frame or two).

Finally, a demo was shown with UAV barriers always happening, which is exactly how DirectX 11 would handle its synchronization. Frame rate was fairly solid, hitting the mid 40 FPS range and sometimes reaching the 50s. Switching to a more optimal UAV (unordered access view) pattern, the frame rate jumps up to 64 FPS (obviously depending on what’s going on at the time). A UAV can be thought of as a little bit like a Pixel Shader render target, but there are a number of key differences, mostly that accesses aren’t necessarily in a specific order and that atomic operations are supported. Going into more detail on UAVs is outside the scope of this article, so look for more info here.

While this is just our initial analysis (and regular readers and viewers will know it’s not as in-depth as normal), there’s a lot to think about, and frankly it’s all very exciting. Microsoft tells us that we’ll be seeing a bunch more Xbox One related ‘stuff’ announced over the next few months. What we do know is that major developers and engine creators are working with Microsoft.

Tim Sweeney (from Epic Games) appeared on stage with Phil Spencer (at a different conference) and said, “Epic will be working closely with NVIDIA and Microsoft to create a world-class implementation of DX12 in Unreal Engine 4. DirectX12 is a great step forward, exposing low-level hardware functionality through an industry standard API to give developers more control and efficiency than ever before.”

Microsoft wants DX12 to be a console-like API, spreading the workload over multiple CPU cores, but also making it easier for developers to target a wider range of hardware… and naturally port titles over to the Xbox One too. There are likely some issues with all of this: it’s highly possible that if a developer doesn’t test their code (or engine) well enough on D3D12, it’ll have interesting results (read: crashing).

“Xbox One games will see improved performance and we’ll bring the same API to all Microsoft platforms,” said Microsoft’s Anuj Gosali. What this means for the Xbox One in terms of raw performance is still a mystery. Looking over this information, it’s tempting to get really excited for the future of the Xbox One, but the problem is that its specs aren’t changing. The console’s 1.32 TFLOPS of GPU performance isn’t going to improve, but the efficiency it operates with can. In other words, it’s possible Microsoft will be able to get more performance out of the same hardware.

For PCs, there’ll be a preview of DirectX 12 due out later this year, and of course most PC gamers are really looking forward to seeing how Windows 10 integrates into everything.

The next year or so will be an extremely exciting time in both the PC and console space, but be sure to check back soon once the dust has cleared to enjoy our full technical analysis.

UPDATE: We’ve covered some DX12 Xbox One information here which also goes into performance increases of the Xbox One’s ESRAM.
