This year is shaping up to be an incredible year in gaming and technology, seeing the release of 14nm graphics cards, the first DirectX 12 and Vulkan titles, Virtual Reality headsets and a whole lot more. With this in mind, we decided to reach out to AMD’s Head of Global Technical Marketing, Mr. Robert Hallock, to pick his brains on developments in the industry.

Question 1: Were you happy with the live crowd and the internet’s reaction AMD received during the Capsacian event at GDC, particularly with the Virtual Reality showcase and the sneak peak of the Polaris GPU?

RH: Feedback seems to be pretty positive! I don’t think people were really expecting us to show off a second desktop GPU based on the Polaris architecture, so the surprise was nice and the chatter afterwards has been pleasing.

Question 2: During Capsaicin, Mr. Koduri placed a lot of emphasis on “Performance Per Dollar” and on wishing to bring that back to gamers, because the price curve no longer follows Moore’s Law if you go to the bleeding-edge chips. Could you elaborate further on what AMD are planning?

RH: I can’t go into more details, yet. 🙂

Question 3A: It was reported during Capsaicin that the Polaris 11 GPU was running 4K VR video content passively (without an actively running fan) – which is rather impressive. Can you provide some insight into how this was achieved and what potential uses AMD believes this might have for different users (i.e., gamers, streamers, professional uses…)?

RH: It all comes down to performance per watt, or power efficiency. The more performance you produce for every watt of power consumed, the easier it becomes to explore wild use cases like fanless VR. We’ve achieved a >2X performance per watt improvement with Polaris, so there is headroom to explore this stuff. For everyday users, that kind of power efficiency will manifest as cool and quiet operation at load.

Question 3B: The Hitman demo was quite interesting – albeit rather short. Can you confirm Hitman was played at max settings in DX12 at 1440p on prototype hardware (I believe Polaris 10) and was actually capped at 60FPS? This has led folks to speculate the GPU is capable of much more, especially on final production hardware. Are you able to comment on this?

RH: You are correct that this was the first public showcase of Polaris 10 running DX12 1440p. However, I cannot confirm more than that at this time.

Question 4: Speaking of Polaris as a whole, could you elaborate on the new naming convention of Radeon graphics cards? Will this mean we will not see the likes of the R9 and R7, with GPUs instead simply labelled Polaris 11, or is this still being decided?

RH: They will still be called Radeon. Polaris 10 and 11 are our codenames for the physical graphics chips themselves, in isolation from being attached to a PCB.

Question 5: Continuing the Polaris theme – the GPUs shown so far are small in comparison to many traditional graphics cards. Are smaller board designs something AMD will prioritize in the future, particularly because of both GDDR5X and HBM?

RH: Much of a GPU’s PCB size is dictated by power circuitry and the routes that link it all together. If you have a more efficient GPU die, then you don’t need quite as much power circuitry and the board size can be reduced. That’s been historically true for a long time, and will be no different with Polaris.

Question 6: What are the primary benefits of the switch to 14nm FinFET? We’ve heard much made of the huge performance per watt (PPW) gains over the older 28nm technology found in current GPUs.

RH: The big benefits are power efficiency and manufacturability. On every point along the clockspeed vs. power curve, FinFET is notably better than 28nm. And since FinFET gates are smaller than 28nm planar gates, you can simply produce more chips per wafer.

Question 7: Now that DirectX 12 is finally here for end users with playable games, how does AMD feel about the API’s adoption rate and performance?

RH: The DX12 adoption rate is on track to be the fastest ever. We know that is true based on our discussions with software partners, comparing what they’re telling us to the historical record for DX9 or DX11. The performance for AMD products has been exceptional: Hitman, Ashes of the Singularity, Quantum Break… all of these DX12 games have produced runaway wins for Radeon GPUs, and asynchronous compute only widens the gap.

Question 8: It’s my understanding that Vulkan was pushed forward with a lot of the backbone of AMD’s Mantle API – are you happy with the progress of Mantle and the open source nature of the API? How do you hope it’ll evolve over the next 12 or so months?

RH: You’re right. We donated the API specification from Mantle to the Khronos Group to jumpstart the process of bringing Vulkan to life. Being iterated on as an industry standard represents one of the “victory scenarios” that we envisioned for Mantle at birth, and we’re thrilled that it happened. Now we’re just looking forward to working with ISVs on expanding the library of games based on Vulkan; we’ve already done some good work with the team behind the Talos Principle, too.

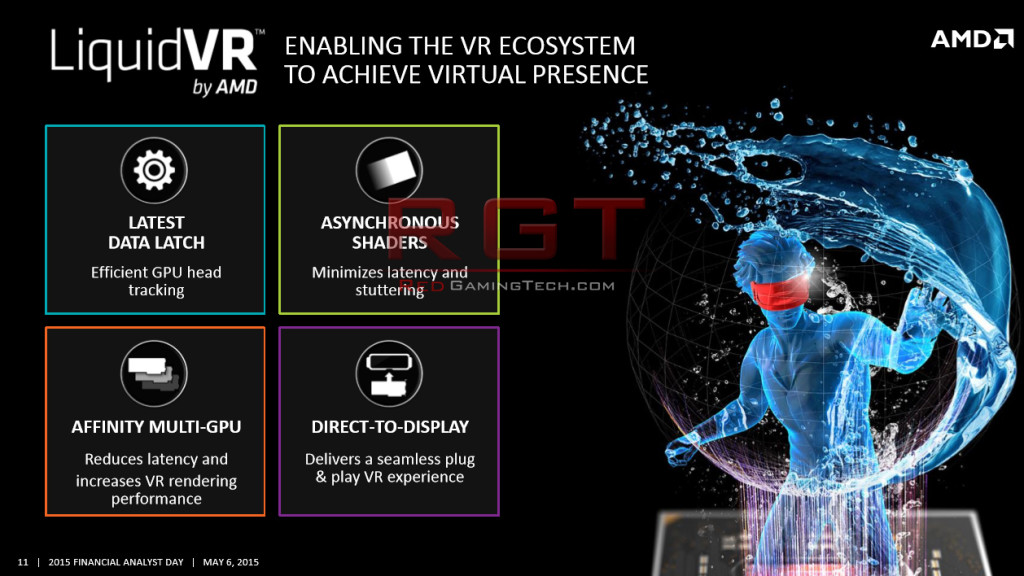

Question 9: Mantle’s API will live on in tools such as LiquidVR, is this correct?

RH: That’s correct. Mantle was born as a flexible API to solve “challenges” in graphics. When Mantle was conceived, the big challenge was the draw call. How do we get more than 7000 draw calls in a scene? Of course, Mantle could do >100,000 draw calls, and every major graphics API has now been reconfigured to follow the path that Mantle blazed. The draw call isn’t a big challenge anymore. Now VR is the big “challenge” in graphics: smoothness, immersiveness, latency, presence. Mantle is great at those things. Adapting Mantle in many ways to underpin LiquidVR was an obvious but critical step forward.

Question 10: AMD’s GPUOpen is a pretty brave decision, placing the code in the public domain and allowing developers to download, tweak, modify and use it as they need. Can you give some insight into both the goals of GPUOpen and why AMD decided to release the code completely open source?

RH: Everybody wins when the full source code to a game is known. Developers win because it is easy and straightforward to solve a bug, implement a cool effect, or optimize performance for any architecture. Gamers win because they can get a fast, stable, good-looking game. And we win because we learn a little more about how developers think and what they need when the code is transparent and discussable. I think we can all agree that this is a win/win/win, and that is why we challenged the industry with GPUOpen.

Question 11: Shifting to Virtual Reality – watching the Sulon Q and other demos during GDC makes me excited for the future. Are AMD happy with the rate of adoption and support it (VR) is receiving? Do you believe the next year or two are almost a “testing phase” as developers experiment with the hardware and its uses, and as the hardware itself improves (such as higher-resolution headsets and reduced costs for end customers)?

RH: Let’s be clear: the testing phase has already happened. We’re now in the first generation of consumer headsets, and already the appetite is sensational. Everywhere you go: to gamers, to developers, to hardware companies, to content creators—everyone is over the moon about getting into the VR game. The genie isn’t going back into the bottle! Now our goal is to show people that Radeon hardware is more than capable—today and tomorrow—of delivering what’s needed for unblemished presence on the Vive and the Rift.

We understand that we’re one of just two vendors with the hardware portfolio to power these headsets. That’s a supreme responsibility, and it’s not lost on us. But Raja’s vision for the hardware and software demonstrates total awareness of the problem, the solution and the path. It’s inspiring, and it’s already clear to me that we’re on the right track when I put on a headset and have a frustration-free experience with a large chunk of our GPU portfolio.

What’s also true about VR is that we all win when the ecosystem gets bigger. So we want to make it very easy for less hardware-aware gamers to get in on the fun, too. That’s why we unveiled the VR Premium brand at our Capsaicin event. It’s an at-a-glance way to say “yep, this hardware definitely meets the requirements for VR gaming.”

Question 12: During Microsoft’s GDC presentation “DirectX 12 Advancements”, they reiterated their support for HDR and claimed adoption rates for HDR displays (particularly into 2017) would likely be faster than for 4K. Can you explain the benefits of HDR for gamers, how AMD are planning to embrace the technology, and whether game developers are interested? Finally, what is the performance hit of full HDR compared to, say, 4K?

RH: I’m a display snob, so HDR is one of those technologies that I just can’t get enough of. Better contrast, blacker blacks, whiter whites, sharper pictures, more nuance, more colors, more vivid colors, more accurate colors: HDR is a huge and obvious improvement in every conceivable way to measure the performance of a display. It’s a technology that just makes you say “whoa” when you see it for the first time, and then it’s really hard to go back to the SDR TV or monitor you have at home. If you’ve ever participated in a TN vs. IPS debate, HDR is right up your alley.

Those picture quality benefits extend to any sort of content that is HDR-aware, which can include movies, photos, and games. Just imagine if nighttime scenes were as detailed and nuanced as daytime scenes. Imagine seeing shades of red, gold and cyan you’ve never been able to see before. Imagine moonlight and starlight that’s crisp and pure, that doesn’t turn the dark night sky a weird shade of grey. This is all possible with HDR, and GPUs based on the Polaris architecture will support it. We plan to support HDR on Fury, Nano and the R9 300 Series too.

HDR has little to no impact on the overall performance of a game, because games already render in HDR color spaces inside the engine. A process called tonemapping compresses and remaps colors in finished frames so they can be shown to you on a standard SDR TV or monitor without appearing perceptually inaccurate. We’re working with game developers to establish more intelligent tonemappers that can identify the capabilities of an HDR display and tonemap the content to an HDR “profile” that perfectly matches what the display can do. As with any other monitor, the better your display is, the better this process will look. But in my opinion, any HDR display looks much better than even the best SDR display.
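To make the tonemapping step Robert describes a little more concrete, here is a minimal sketch in Python. It uses the well-known Reinhard curve purely as a stand-in; it is not AMD’s tonemapper or any particular game engine’s. The function names and the 100-nit and 1000-nit display peaks are hypothetical values chosen for illustration.

```python
def reinhard(x: float) -> float:
    """Classic Reinhard curve: maps any value in [0, inf) into [0, 1)."""
    return x / (1.0 + x)


def tonemap_to_display(scene_nits: float, display_peak_nits: float) -> float:
    """Compress a scene luminance (in nits) into what a display can show.

    The scene value is normalised by the display's peak brightness before the
    curve is applied, so the result always stays below that peak.
    """
    if scene_nits < 0 or display_peak_nits <= 0:
        raise ValueError("luminance must be non-negative and peak must be positive")
    return reinhard(scene_nits / display_peak_nits) * display_peak_nits


if __name__ == "__main__":
    # Hypothetical peaks: a 100-nit SDR panel and a 1000-nit HDR panel.
    for peak in (100.0, 1000.0):
        dim_highlight = tonemap_to_display(4000.0, peak)
        bright_highlight = tonemap_to_display(10000.0, peak)
        print(f"{peak:6.0f}-nit display: {dim_highlight:7.1f} vs {bright_highlight:7.1f} nits")
```

Under these assumptions, two highlights of 4,000 and 10,000 nits end up roughly 1.4 nits apart on the SDR panel but roughly 109 nits apart on the HDR panel, which is one way to picture why a display-aware tonemapper can preserve more nuance in bright areas.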

Question 13: I’m unsure if you can answer this question (so just give an N/A if you can’t), but reports have popped up that Polaris may not be using High Bandwidth Memory 2 and that we may only see HBM2 with Vega 10. Can you confirm or speak more about this?

RH: N/A for now.

Question 14: I’ve recently had the opportunity to review an AOC FreeSync monitor (an AOC G2460PF), and was quite the fan. It was my first real experience with either a FreeSync or G-Sync display, and I really loved the lack of tearing and the visual quality. Can you comment on how AMD feel about FreeSync’s position in the marketplace and how future monitor technology will improve?

RH: FreeSync is in an excellent position in the market. In 13 months, the technology has grown from three supporting monitors to over 50 supporting monitors. We got LCD Overdrive working within 30 days of launch. We have multiple partners that reach down to 30Hz to cover the full range of playable framerates. We have Low Framerate Compensation, which for nearly 20 FreeSync monitors can sustain smooth gameplay down to around 25 FPS. There are 4K FreeSync monitors, ultrawide FreeSync monitors, 1440p FreeSync monitors, 1080p FreeSync monitors. And many reviewers have noted that the average FreeSync display costs less than the average G-SYNC display. That looks like total victory to me.
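For readers wondering how a feature like Low Framerate Compensation can stay smooth below a panel’s minimum refresh rate, the sketch below shows the general idea of repeating each frame a whole number of times so the effective refresh rate lands back inside the supported range. This is a simplified model of the concept, not AMD’s driver logic; the function name and the 40–144Hz and 40–60Hz ranges are hypothetical.

```python
import math


def lfc_refresh(fps: float, min_hz: float, max_hz: float) -> tuple[int, float]:
    """Return (times each frame is shown, resulting panel refresh rate in Hz).

    Simplified model: if the game runs slower than the panel's minimum refresh
    rate, show every frame several times so the panel stays within its range.
    """
    if fps <= 0:
        raise ValueError("fps must be positive")
    if fps >= min_hz:
        # Already inside the variable refresh range (or above it): no repeats.
        return 1, min(fps, max_hz)
    multiplier = math.ceil(min_hz / fps)
    refresh = fps * multiplier
    if refresh > max_hz:
        # The range is too narrow for a whole-number multiple to fit.
        raise ValueError("refresh range too narrow for frame repetition")
    return multiplier, refresh


if __name__ == "__main__":
    # Hypothetical panel with a 40-144Hz variable refresh range.
    for fps in (25.0, 30.0, 60.0):
        print(fps, lfc_refresh(fps, 40.0, 144.0))
```

In this model a 25 FPS game on the hypothetical 40–144Hz panel has each frame shown twice, for an effective 50Hz. With a narrow range such as 40–60Hz, a 35 FPS game has no whole-number multiple that fits, so the sketch raises an error instead.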

Question 15: What technology and games are you personally looking forward to over the next 12 months?

RH: I really want a Polaris 10-based GPU, an HDR FreeSync monitor, and a copy of Deus Ex: Mankind Divided. I imagine I’ll also get sucked back into World of Warcraft when Legion arrives.

Question 16: During the interview we’ve spoken a lot about power efficiency, GPUOpen and Virtual Reality. With this in mind, can you provide insight into what AMD’s goals are in the future regarding graphics technology? Where do you predict the market will be going?

RH: We believe that graphics is now entering into the era of the deep and immersive pixel. It’s not enough, now, to simply drive a huge whack of pixels at playable framerates. Those are table stakes. It’s still a mission to go ever faster, of course, but the industry is starting to weigh more heavily the value of packing more information into every pixel too. Those pixels have to be more colorful and interesting than they’ve ever been.

That’s where technologies like HDR and VR come in. From there, the trajectory is incredible: we want 16K*16K pixels per eye at 240 FPS. That’s enough pixels and responsiveness to exceed retinal acuity. We want HDR panels with such incredible dynamic range that the human eye cannot distinguish between the panel and looking at the same scene out a window. All of this is achievable in our lifetimes.
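To put that target in perspective, the quick calculation below compares the raw pixel throughput of 16K*16K per eye at 240 FPS with a single 4K display at 60 FPS. It assumes “16K” means 16,384 pixels per side and takes 4K as 3840×2160; both are our assumptions rather than figures from Robert, and the function name is hypothetical.

```python
def pixels_per_second(width: int, height: int, eyes: int, fps: int) -> int:
    """Raw number of pixels that must be produced every second."""
    return width * height * eyes * fps


# Assumption: "16K" is read as 16384 pixels per side, rendered once per eye.
vr_target = pixels_per_second(16384, 16384, eyes=2, fps=240)

# Assumption: a single 3840x2160 display at 60 FPS as the comparison point.
uhd_60 = pixels_per_second(3840, 2160, eyes=1, fps=60)

if __name__ == "__main__":
    print(f"VR target : {vr_target:,} pixels per second")
    print(f"4K at 60  : {uhd_60:,} pixels per second")
    print(f"Ratio     : {vr_target / uhd_60:.1f}x")
```

Under those assumptions the VR target works out to roughly 129 billion pixels per second, a little under 260 times the throughput of 4K at 60 FPS, which gives a sense of how far the trajectory Robert describes extends beyond today’s hardware.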

Question 17: Backtracking to the Capsaicin event for a moment – Mr. Koduri said he believed the future would very much be the “immersive era”, which would be a combination of high-performance hardware (particularly GPUs, because of their highly parallel nature) and game engines. Can you give insights into how the immersive era might impact end users?

RH: Mostly answered in Question 16.

Question 18: (Unsure if you can answer.) During the Capsaicin event, AMD’s Radeon roadmap was shown off, with Polaris in 2016, Vega in 2017 and Navi beyond that. There are rumors that Vega is in fact the GPU previously known as “Greenland” – can you confirm or deny this?

RH: I cannot discuss the roadmap beyond what was shown on the slide.

Question 19: (probably unable to answer) – Navi mentions “Scalable Architecture” and NextGen memory. I realize you can’t tell me the number of shaders, process and the other exact specs, but can you give any insights as to what those terms (Scalable and Next Gen Memory) may mean?

RH: Nope! 🙂 But we will. One day. When we’re ready.

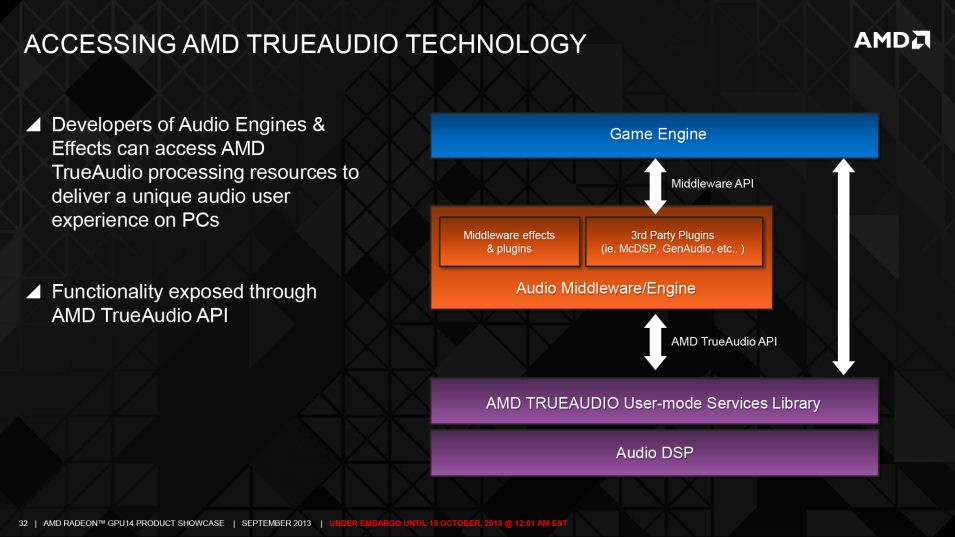

Question 20: I understand AMD are still pursuing TrueAudio technology, but I’ve heard the technology is primarily used in games consoles. Can you confirm this is the case, or if you will continue pushing TrueAudio for the PC?

RH: We demonstrated TrueAudio technology on Polaris at Capsaicin. We believe that virtual reality especially benefits from rich, nuanced and positional soundscapes.

Question 21: AMD are putting a lot of work into HCC and open compute platforms, and offering HIP to port CUDA source code to C++ (to run across multiple architectures, including AMD and Nvidia). How has the initiative been received by users?

RH: I do not work in professional graphics, so I cannot really answer this question.

Question 22: Last time we spoke, you helped explain what Async Compute was, and now that DirectX 12 has dropped we can truly start seeing the benefits of the technology. Can you explain a little about why AMD decided to include the ACE (Asynchronous Compute Engine) in GCN hardware, and are you pleased with its adoption by developers?

RH: We predicted that GPU compute would become popular for PC gaming in 2009 or 2010. Our engineers recognized that setup for effects like lighting, shadowing, reflections, physics, AI, and DOF (Depth of Field) could be extracted from the graphics queue and parallelized. It was a route to “free” performance. That guided our hand to bet on the ACE in Graphics Core Next.

Next we ensured that this hardware was accessible on the consoles to prime the pump in game developer land. Then we brought Mantle to life on the PC, to train PC game developers on how to access the ACE and use asynchronous compute for PC gaming. Then came DX12, and then we donated Mantle to the Vulkan project.

And here we are! Radeon is offering incredible performance in DX12 applications, and we’re excited to do the same for Vulkan as that API grows. Our five-year plan has begun to bear fruit for our customers and the wins just keep coming.

Question 23: With both the PlayStation 4 and Xbox One having been developed on for some time now, how do AMD believe their existence has benefited the PC, or indeed AMD (aside from financially)? It’s my understanding, for example (linked to the above question), that Async Compute is being used a lot on both the X1 and PS4 for multiple titles.

RH: You nailed it. As we move into the DX12 and Vulkan era, you’re seeing how GCN presence in the consoles has cultivated intimate familiarity with the ISA amongst game developers. Every developer cut their teeth on things like Async Compute in the console space, and they’re now porting that knowledge to the PC space to improve the PC gaming experience.

Question 24: How much work does it require on the part of developers to take advantage of Asynchronous Shaders for meaningful performance gains?

RH: We’ve had reports of developers getting the feature up and running in a weekend. Naturally, more extensive concurrency is encouraged by a forward-thinking and modern engine that can dispatch a lot of multi-engine work (e.g. Nitrous engine). But presuming that infrastructure is in place, it’s not overtly challenging and lots of new engines and engine rewrites are coming online for the new graphics APIs.

Question 25: One of the big problems with Virtual Reality is latency, and it can cause users to feel sick. How are AMD solving the problem with LiquidVR and other tech, and how do you think this will evolve over time? Even Futuremark have recently released a benchmark which highlights these issues (VRMark).

RH: I’ll quickly give three examples of how LiquidVR addresses the latency challenge for VR.

1) Latest data latch: https://www.youtube.com/watch?v=e_o22yJOgkg

2) Affinity multiGPU: https://www.youtube.com/watch?v=7VtAilKwoLI

3) Asynchronous shaders: https://www.youtube.com/watch?v=v3dUhep0rBs

That’s a lot of video to watch, but you can see how we’re tackling latency through multiple software vectors on top of simply making faster GPUs.

Thanks a lot to both Robert and AMD for taking the time to help answer the questions! We’ll try to follow up when AMD finally unveil Polaris!