If I mention the term “Machine Learning”, there’s a good chance you’ll start talking about how a Neural Network will become self-aware and deem humanity a threat come Judgement Day, but the reality is that AI is an extremely broad term, and in gaming it’s more important than it’s ever been.

PC gamers have long heard of DLSS (Deep Learning Super Sampling). If you’re less familiar with the term, it essentially uses machine learning (training first, then inference) to run a trained network on your GeForce RTX graphics card, upsampling games from a lower resolution to a much higher one, all while saving a lot of performance.

I want to quickly run over how this works, and then go into how I think consoles and AMD’s own technology (FidelityFX Super Sampling) will change gaming for the better, and why native pixel counts no longer have a place in judging image quality.

The Training part of Nvidia’s DLSS is just what it says on the tin. It uses high-performance supercomputers many times more powerful than anything you could hope to have at home (outfitted with tons of CPUs and many graphics cards, all networked together into huge clusters). These systems are given ultra-high-resolution reference images of a game (say the new COD) at a resolution such as 16K, along with lower-resolution footage (at, say, 1080P), and the neural network has the job of making the low-quality image look just like the high-quality reference image.

Obviously, it isn’t great at this task at the start, but eventually the neural network starts to learn, generating images which are close to, or in the best scenarios look better than, the original reference work. The training is the computationally difficult part. After this, all of that work is distilled into a very small trained model which can be run on your home GeForce card, in what is known as “Inference”.
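To make the training/inference split a bit more concrete, here is a deliberately tiny sketch. It is not how DLSS works internally (the real network is vastly larger and operates on full game frames); it’s a toy 1D upsampler with just two weights, and every function name and signal in it is made up for illustration. “Training” fits the weights against high-resolution reference signals, and “inference” is the cheap part that applies those weights to new low-resolution input.

```python
def make_pairs(high_res):
    """Turn one high-res 1D signal into training examples.

    The low-res version keeps every other sample; the target is the
    in-between sample that was thrown away.
    """
    pairs = []
    for i in range(0, len(high_res) - 2, 2):
        left, target, right = high_res[i], high_res[i + 1], high_res[i + 2]
        pairs.append(((left, right), target))
    return pairs


def train(pairs):
    """'Training': least-squares fit of target ~ w_left*left + w_right*right.

    Solves the 2x2 normal equations directly (toy stand-in for a real
    training loop on a neural network).
    """
    a = sum(l * l for (l, r), _ in pairs)
    b = sum(l * r for (l, r), _ in pairs)
    d = sum(r * r for (l, r), _ in pairs)
    e = sum(l * t for (l, r), t in pairs)
    f = sum(r * t for (l, r), t in pairs)
    det = a * d - b * b
    return (e * d - b * f) / det, (a * f - b * e) / det


def infer(low_res, weights):
    """'Inference': rebuild the missing in-between samples with the trained weights."""
    w_left, w_right = weights
    out = []
    for left, right in zip(low_res, low_res[1:]):
        out.extend([left, w_left * left + w_right * right])
    out.append(low_res[-1])
    return out


# Hypothetical "reference" signals standing in for high-resolution frames.
references = [[0, 1, 2, 3, 4], [9, 7, 5, 3, 1]]
training_pairs = [pair for ref in references for pair in make_pairs(ref)]
weights = train(training_pairs)

print(weights)                        # the whole "trained model" is two numbers
print(infer([10, 20, 30], weights))   # upsample an input it has never seen
```

The point of the toy is the shape of the workflow: the expensive fitting happens once, offline, and what ships to the player is only the small set of learned weights.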

I’ve covered DLSS and the quality of it on the channel before, and I’ll leave a link down below, but consider that a brief overview of the basics of how DLSS works.

For the PlayStation 5 and Xbox Series X, there’s a lot of discussion of 120FPS, 4K, ray tracing and all of the other usual buzzwords which have been flying around since the hype for this generation started. But look at the GPU performance uplift from Xbox One X to Series X, and we can see that going from 6 TFLOPS to 12 isn’t a huge uplift.

Architecture efficiency has a big role to play here. The work that RDNA 2 can do per clock is considerably more than the Polaris-based architecture found inside the Xbox One X (see this image, which shows what RDNA 1 can do versus Polaris with similar GPU configurations), and Series X also has a much better balance of pixel fill rate and geometry throughput. In other words, it can do more with those 12 TFLOPS of RDNA 2 than a 12 TFLOPS Polaris-based GPU could achieve. Still, the raw performance metrics are hard to ignore.

The RX 5700 features Navi 1x (RDNA 1) with 36 Compute Units, and the RX 480 is based on the Polaris architecture, also with 36 Compute Units. You can clearly see the difference in the performance of the two graphics cards, despite the raw TFLOPS being identical when both run the same configuration at the same clock. That figure is 36 CU x 64 Shaders Per CU x 2 operations per clock (a fused multiply-add counts as two) x Clock Frequency, and this formula is the same on either Polaris or RDNA 1.
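As a quick worked version of that arithmetic, here is a small sketch. Peak FP32 throughput is normally quoted with a factor of two on top of the shader count and clock, because a fused multiply-add counts as two operations. The function name is my own, the 1.5GHz clock is a made-up figure for the like-for-like comparison, and the console CU counts and clocks are the publicly quoted specs rather than anything measured here.

```python
def peak_tflops(compute_units, clock_ghz, shaders_per_cu=64, ops_per_clock=2):
    """Theoretical peak FP32 throughput in TFLOPS.

    ops_per_clock=2 because a fused multiply-add counts as two operations.
    """
    gflops = compute_units * shaders_per_cu * ops_per_clock * clock_ghz
    return gflops / 1000.0


# Same CU count at the same (hypothetical) clock gives the same number,
# regardless of whether the architecture is Polaris or RDNA 1.
polaris_like = peak_tflops(36, 1.5)
rdna1_like = peak_tflops(36, 1.5)
print(polaris_like, rdna1_like)

# Console figures, assuming the publicly quoted CU counts and clocks.
xbox_one_x = peak_tflops(40, 1.172)
series_x = peak_tflops(52, 1.825)
print(round(xbox_one_x, 2), round(series_x, 2))  # roughly 6.0 and 12.15
```

The formula has no term for architecture at all, which is exactly why two GPUs with matching TFLOPS can perform so differently in real games.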

Thankfully, Microsoft and Sony have access to a lot of additional technology which helps maximize the performance of the GPU. In a previous video, I detailed DirectX 12 Ultimate and why it was so important, so I’ll leave a link to that too, as we covered topics such as mesh shading.

The takeaway from that video, though, is that while technology such as Ray Tracing is important, it’s efficient control over the rendering pipeline (which on the PC and Xbox is via Mesh Shaders, and on PS5 is driven more by the hardware-based Geometry Engine and Sony’s own shaders) that actually enables it.

Culling geometry which falls outside of view (either outside of screen space or simply blocked by another piece of geometry) and shading objects in motion at lower quality are both in the name of freeing up GPU performance, which in turn can be used for technology such as hardware-based Ray Tracing. But as you’ve likely figured out by now, image reconstruction is perhaps the biggest part of this puzzle.

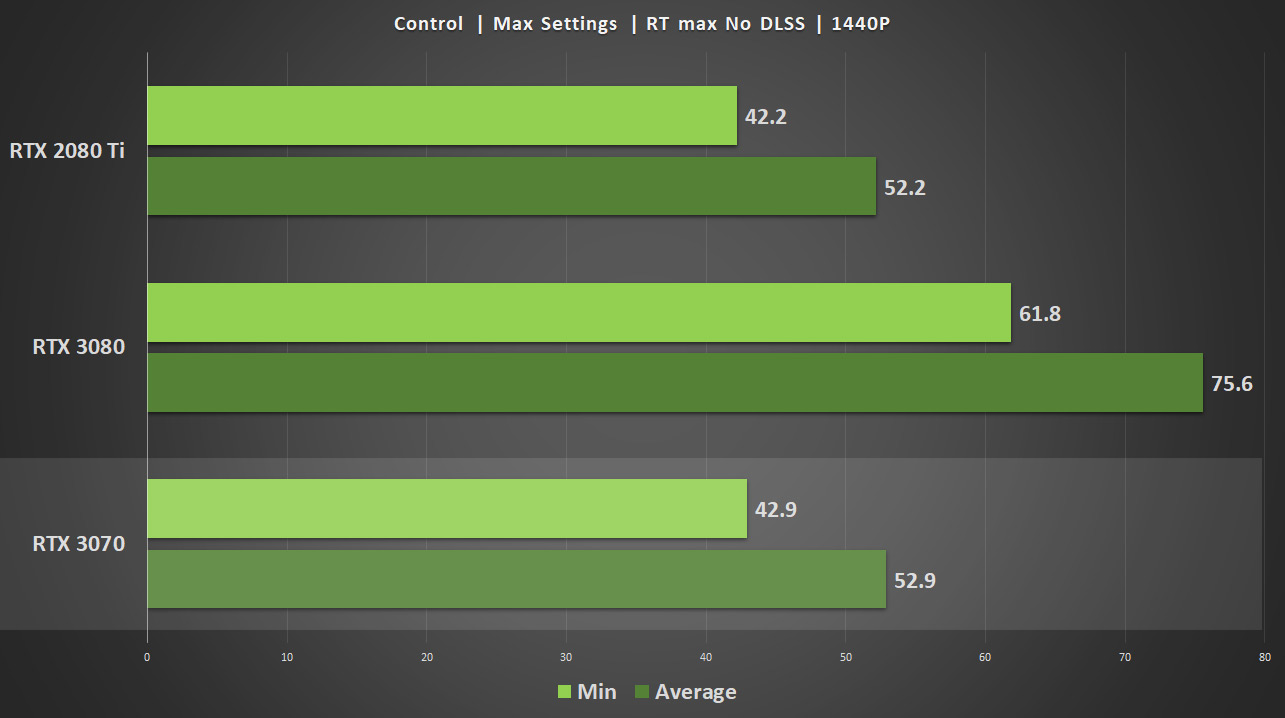

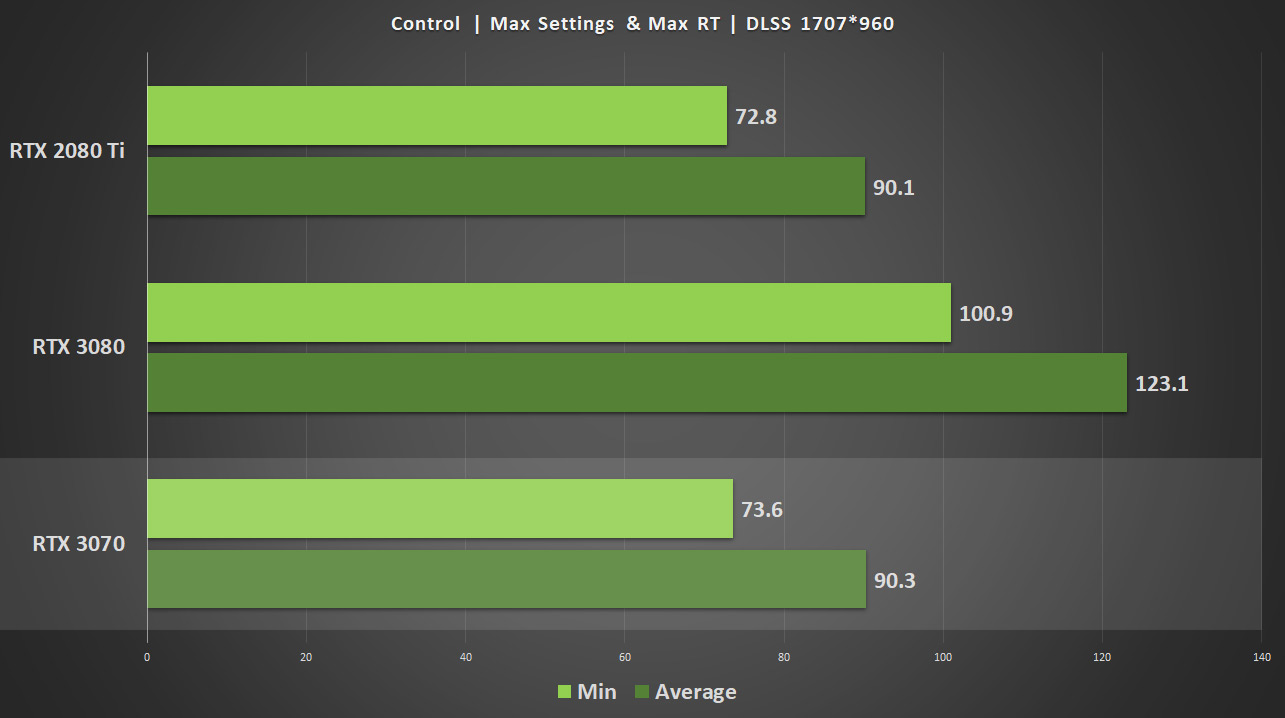

4K is 3840×2160 pixels, which is 4x the number of pixels of 1080P (1920×1080), a huge increase in the amount of stuff that the console or PC graphics card is being asked to draw per frame if we’re talking about native resolution. Here are a few benchmarks of a couple of PC games on an RTX 3080 without DLSS or Ray Tracing enabled, and you can see just how heavy a toll increasing the resolution takes. Throwing DLSS into the mix suddenly means that even enabling hardware-based ray tracing is possible, and at much higher frame rates too.
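The pixel arithmetic is easy to check for yourself. The sketch below just multiplies out the resolutions; 1440P is included purely as an extra reference point, and the helper names are my own. Bear in mind that rendering cost doesn’t scale perfectly with pixel count, so treat the ratios as a rough guide to the workload rather than a prediction of frame rate.

```python
RESOLUTIONS = {
    "1080P": (1920, 1080),
    "1440P": (2560, 1440),
    "4K": (3840, 2160),
}


def pixel_count(name):
    """Total pixels drawn per frame at the named resolution."""
    width, height = RESOLUTIONS[name]
    return width * height


def pixel_ratio(target, source):
    """How many times more pixels `target` has than `source`."""
    return pixel_count(target) / pixel_count(source)


for name in RESOLUTIONS:
    print(name, pixel_count(name), f"4K is {pixel_ratio('4K', name):.2f}x this")
```

So rendering internally at 1080P and reconstructing to 4K means the GPU only has to shade a quarter of the pixels each frame.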

What does this mean? Well, the image is being rendered at a much lower resolution, of course, but then machine learning does its magic and ‘guesses’ the missing information, filling in fine detail like blades of grass, characters’ hair and facial details, and world geometry. Just as importantly though, because Ray Tracing or more traditional rasterization is working on far fewer pixels, the frame rates are higher.

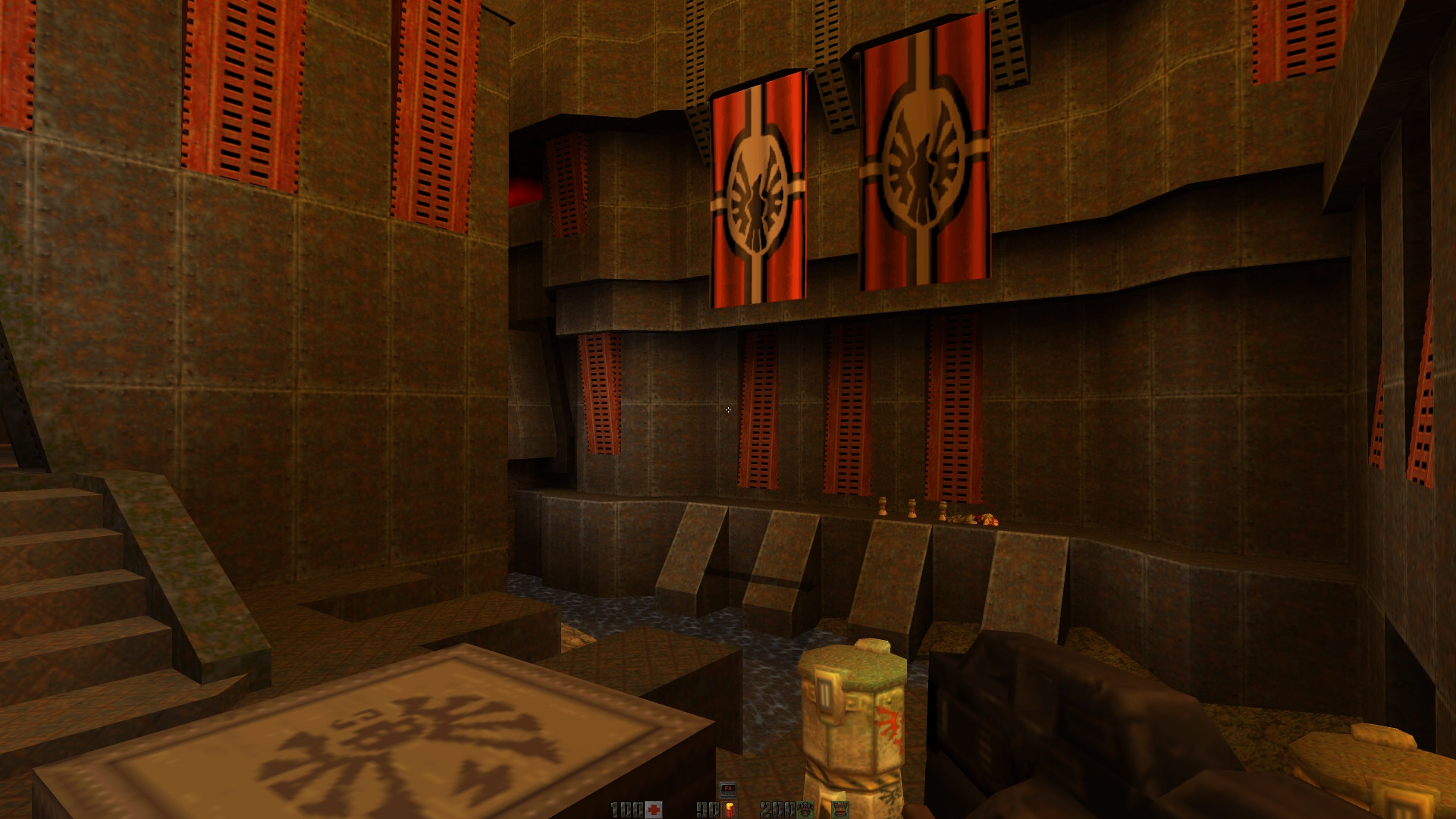

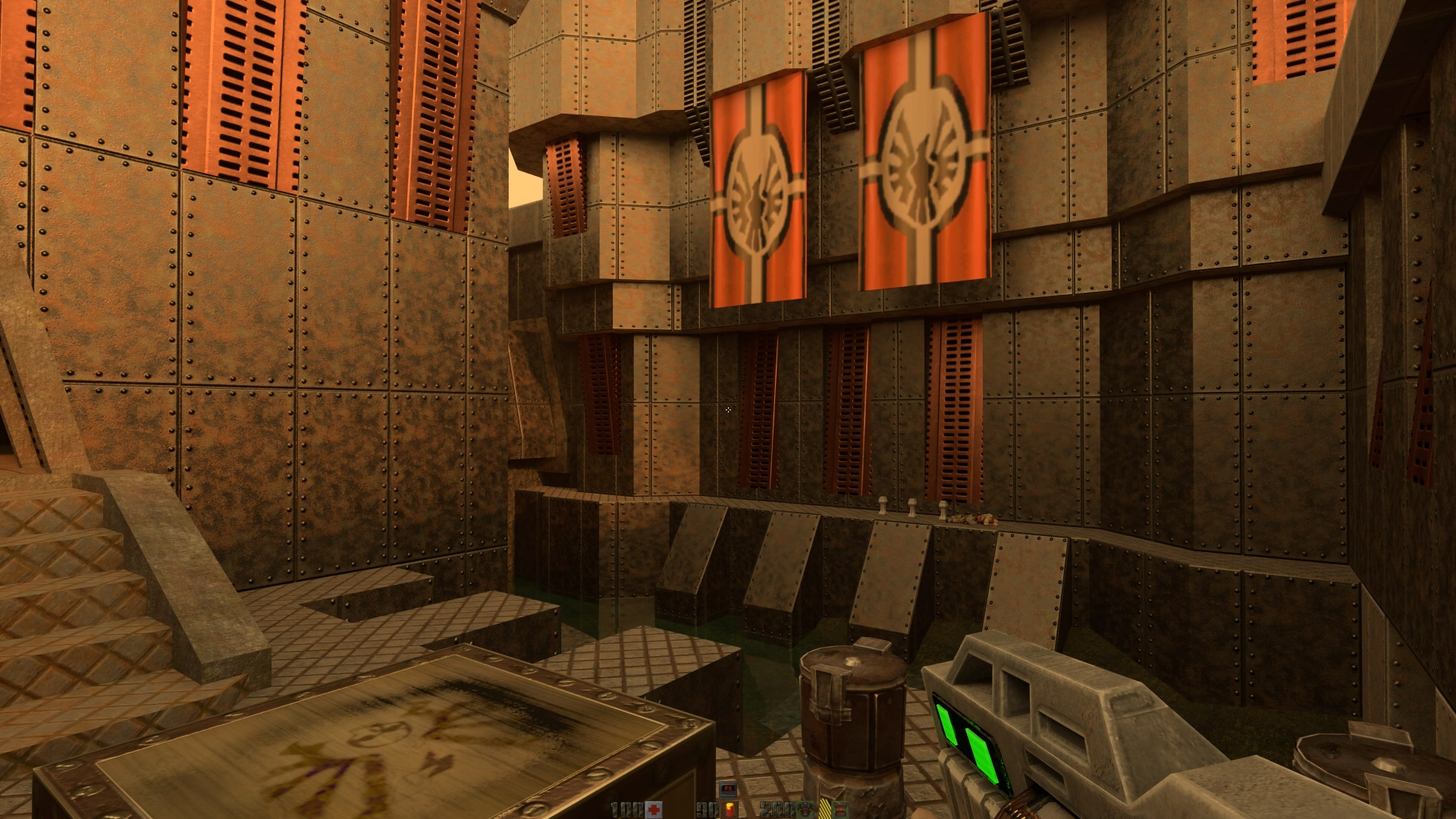

To put it another way, above is a capture of Quake 2 (not the RTX version) running at 4K. Below is a photo of Quake 2 running in RTX mode, which looks improved, but again, there’s only so much you can do given the starting point of a late-90s game engine.

Does it look better than Death Stranding, with or without DLSS? Of course, the answer is no.

Let’s take a look at Call of Duty Black Ops Cold War, first with RT set to the highest and DLSS enabled (you can see it here with the highest frame rate). Notice the floor reflections, and the subtle interaction of shadows and object reflections. Looks pretty good, right?

Here’s the exact same scene, but this time both RT and DLSS are disabled. On the GeForce RTX 3080 I am testing with, you’ll notice that the FPS has dipped from the mid-90s to just 61 FPS, despite the lack of hardware-based RT. Enabling RT without DLSS at 4K with these settings really hurts FPS, sometimes dipping below 30 when things become hectic.

So with CoD, using DLSS to reconstruct the image gets us roughly 30FPS more, with ray tracing on top.

Perhaps with the examples you’ve seen now, you’ll start to get a better understanding of the point: higher performance, and virtually no difference in actual image quality. Yet people (especially in the console space) continue to think of non-native 4K (or any other target resolution) as a failure of either the hardware or the developers, when in reality there’s a finite amount of resources, which can and must be used in a smart way.

AMD has recently confirmed that RDNA 2 for the desktop will use an upsampling technology, FidelityFX Super Sampling, as its answer to Nvidia’s DLSS. Details are scarce for now (and likely will be until later this year or early next year, as the feature isn’t launching with the cards, but will instead arrive later through driver and software updates), but what we do know is that the basic skeleton of this technology is available for games developers to leverage on PlayStation 5, Xbox Series and of course PC architectures too.

Microsoft has been heavily invested in Machine Learning (DirectML) to this end for some time, and from what we’re able to piece together, the efforts of AMD, Sony and Microsoft seem to differ in their implementation from Nvidia’s. One example is Forza, with frames being upsampled from a lower resolution. The discussion about this from the developers is rather technical and dates back to before the Xbox Series consoles hit store shelves, but the basic gist of the message is clear: save performance and crank up the quality of each rendered frame, then use upsampling technology to improve the visual quality.

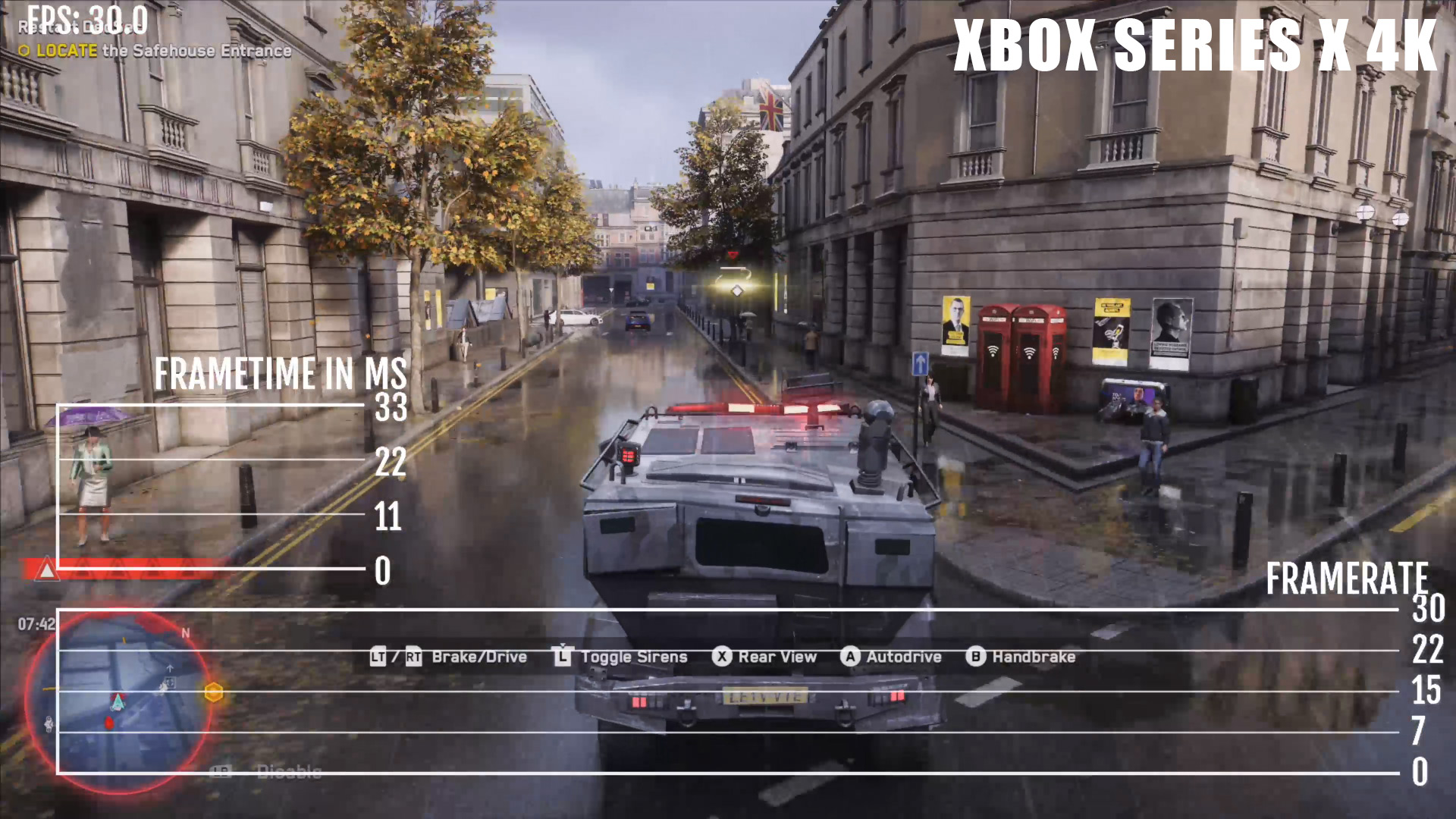

Let’s take a look at a few games and their performance results, with Watch Dogs Legion and Control. There’ll be more comparisons and analysis of the consoles coming soon from me, but these two titles are a fairly good example of games which are very demanding for next-generation console (and PC) hardware.

The Xbox Series X version of Watch Dogs uses custom settings which aren’t present in the PC version. They’re best described as a mixture of the higher PC settings in general, albeit with hardware-based ray tracing set to medium, more liberal use of traditional raster techniques to save performance, and a limit on the number of bounces per ray.

Thanks to the PC version of the game, we know that both the console and PC versions render the ray tracing internally at a much lower resolution (just 1080P, even if you’re running the PC game at 4K) in an effort to save pixels (basically, a sort of checkerboard upsampling is used on the ray tracing, even on PC).

Watch Dogs on PC also supports DLSS, which saves a lot of performance and lets you crank the quality settings much higher than would be possible with a native image. Watch Dogs at Ultra quality is nothing short of very demanding.

The two images above tell much the same story for Watch Dogs as we saw with Call of Duty: 62 FPS with DLSS and RT maxed, upsampling to 4K, compared to the mid-40s with everything else maxed but RT and DLSS disabled. In short, to get to 60FPS natively, we would need to reduce quality settings even further, meaning not only would we be missing Ray Tracing, but we would also need to lower traditional rendering quality from the Ultra settings (again, consoles use a mixture of custom medium to high settings, and target 30FPS).

Control is very much the same story, with full hardware-based ray tracing pounding the in-game frame rate, but DLSS, again, saving the day.

This isn’t going to be the end of upsampling and non-native resolutions either. Microsoft’s teams are experimenting with having games ship with low-resolution textures, then using AI to upsample them in real time until they’re nearly indistinguishable from native 4K textures. With game install sizes ballooning rapidly, this is going to be extremely important for both console and PC games, with a plethora of benefits, including reducing the space games take up on disk, reducing the amount of data needing to be transferred around the system and in general improving the experience for everyone.

Reconstruction and image upsampling technology may not be ‘new’, but the research into it has never been as strong as it is now. No matter how powerful Sony, Microsoft or the GPU vendors make their hardware, there’s a finite amount of resources available, and spending that performance smartly, then upsampling to save budget, just makes sense.

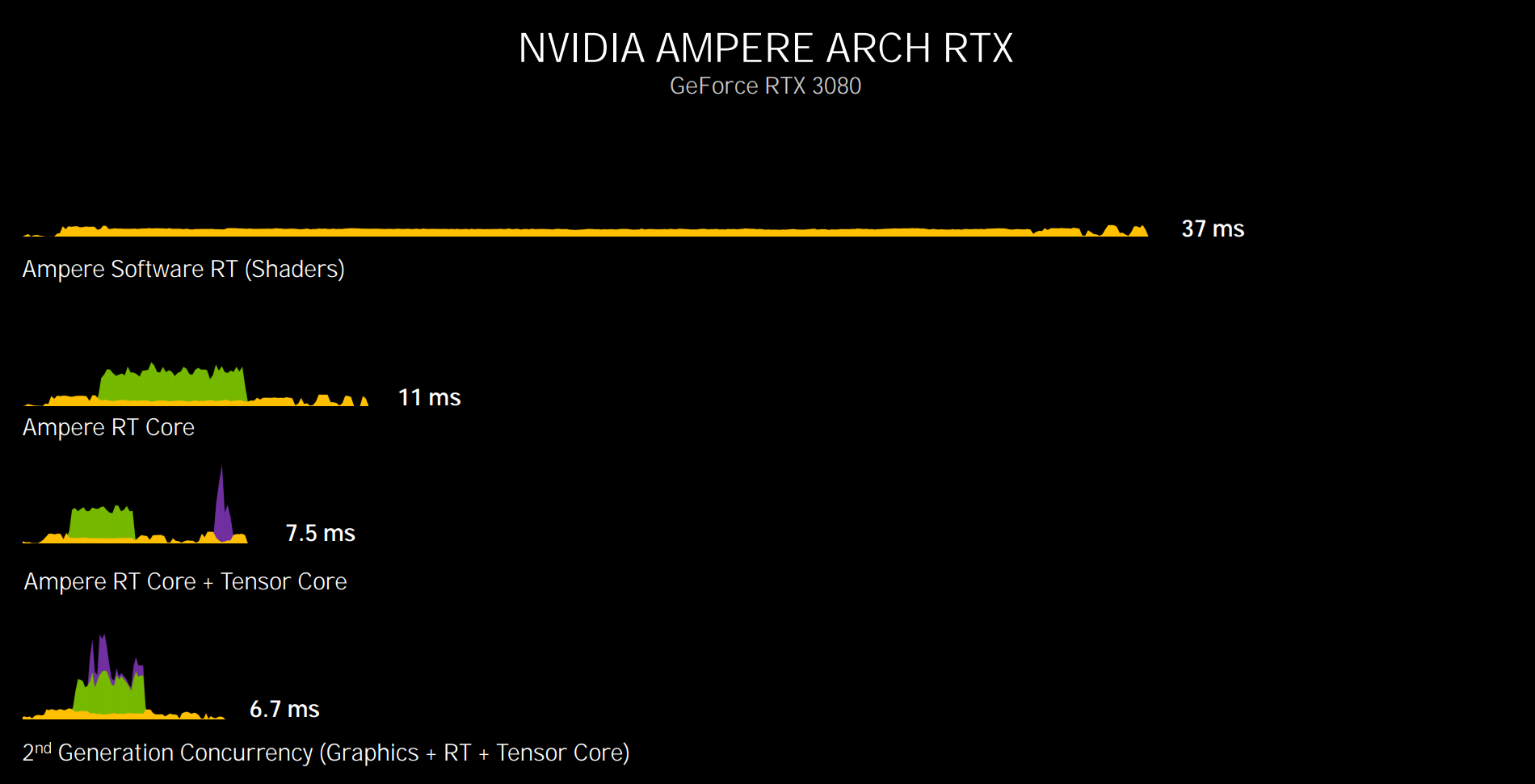

DLSS from Nvidia uses 8-bit operations on the Tensor Cores of the GPU. Below you can see a single frame, with Nvidia’s Shaders (at top) handling Ray Tracing work along with traditional rasterization. The next few images show how, as RT cores and then Tensor Cores (for upsampling) are brought into play, massive performance savings follow, taking the time to render a frame from 37ms (so under 30FPS) to just 6.7ms, which is more than enough to be over 120FPS (a 120Hz frame is about 8.3ms).
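Converting between frame time and frame rate is simple division, and it’s worth seeing the numbers side by side. This is just a sketch of that arithmetic with helper names of my own choosing; the 37ms and 6.7ms figures are the ones quoted above.

```python
def frame_time_to_fps(frame_time_ms):
    """Frames per second achievable if every frame takes frame_time_ms."""
    return 1000.0 / frame_time_ms


def refresh_budget_ms(refresh_hz):
    """Time available per frame to hit a given refresh rate."""
    return 1000.0 / refresh_hz


def fits_budget(frame_time_ms, refresh_hz):
    """True if a frame of this length is fast enough for the refresh rate."""
    return frame_time_ms <= refresh_budget_ms(refresh_hz)


for ms in (37.0, 6.7):
    print(f"{ms}ms per frame is about {frame_time_to_fps(ms):.1f} FPS")

print(f"120Hz budget: {refresh_budget_ms(120):.2f}ms")
print("6.7ms fits 120Hz:", fits_budget(6.7, 120))
print("37ms fits 30Hz:", fits_budget(37.0, 30))
```

At 37ms the frame misses even a 30FPS budget (33.3ms), while 6.7ms leaves headroom under the 8.3ms needed for 120FPS.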

RDNA 2, though, leverages AMD’s next-generation architecture, where 8-bit operations are run on the CUs as lower-precision operations, which largely mimics what Tensor Cores are capable of. This does take performance away from the CUs (which are also responsible for other game code), but the speed-up should still be tremendous compared to running the game at a higher resolution.

RDNA 2 also has its own Ray Tracing cores, albeit they’re a little different in functionality from what is found in Nvidia’s Ampere architecture. We’ll discuss this further in the future though.

Consoles have been playing around with image reconstruction and upsampling for some time too. While Sony’s PlayStation 4 Pro added hardware support for checkerboard rendering, even Killzone Shadow Fall (a launch PS4 game) used a type of image reconstruction technology. Although different from what we’re discussing here, Shadow Fall used Temporal Reprojection for its image reconstruction.

This mode was for multiplayer, where rather than the full-resolution image of 1920×1080, a half-width resolution was used (960×1080). The current frame, along with the two previous frames, was stored, and the image was reconstructed thanks to that history.
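The general idea of rebuilding a full-width frame from half-width history can be sketched in a few lines. This is a heavily simplified illustration, not Guerrilla’s implementation: it assumes alternate frames render alternate columns, that the scene is completely static, and that only one previous frame is needed. The real technique kept more history and had to account for motion. All names here are hypothetical.

```python
def render_half_width(scene, frame_index):
    """Render only every other column; even frames take even columns, odd frames odd."""
    parity = frame_index % 2
    return [row[parity::2] for row in scene]


def reconstruct(current_half, previous_half, frame_index):
    """Interleave the current half-width frame with the previous one.

    Assumes nothing moved between the two frames, so the previous
    frame's columns are still valid for the gaps in the current one.
    """
    parity = frame_index % 2
    full = []
    for cur_row, prev_row in zip(current_half, previous_half):
        row = [None] * (len(cur_row) + len(prev_row))
        row[parity::2] = cur_row        # columns rendered this frame
        row[1 - parity::2] = prev_row   # columns reused from history
        full.append(row)
    return full


# A tiny 4x2 "scene" standing in for a 1920x1080 image.
scene = [[0, 1, 2, 3],
         [4, 5, 6, 7]]

frame0 = render_half_width(scene, 0)   # even columns only
frame1 = render_half_width(scene, 1)   # odd columns only
print(reconstruct(frame1, frame0, 1))
```

Each frame only costs half the pixels to render, and in the static case the interleaved result matches the full-resolution scene exactly. Handling movement is where the real complexity lies.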

Personally, I think that terms like native resolution have lost a lot of relevancy over the past few years, certainly since the PS4 Pro and Xbox One X launched (with the PS4 Pro sporting dedicated hardware for checkerboard rendering). I suspect this is a trend which will continue as the generation progresses and game developers start to fully leverage the hardware of both consoles. On the PC front too, the push from AMD with its FidelityFX Super Sampling, and Nvidia continuing to smack DLSS over all of our heads, is only ever positive.

Back when multi-core and multi-threaded processors became commonplace in the market, we needed to evaluate the performance of machines differently than ever before, and now graphics technology continues to evolve in the same way. Hardware T&L, unified graphics pipelines, and now the rise of Ray Tracing and upsampling technology. Think of this as a new era for gaming, and great for technology across the board.