When the specifications of Microsoft’s Xbox One were first unveiled to the public, the console received a lot of criticism due to the perceived gap between it and its rival, Sony’s Playstation 4. It’s hard to argue the difference in specs hasn’t hurt the Xbox One’s sales figures, despite the rather nice bump the Kinect-less SKU has given. Several readers and viewers have requested I write a more detailed rundown of the basic differences between the two GPUs. Let’s spend a bit of time looking at the hardware of both machines (specifically the GPUs) and seeing what all the fuss is really about.

Please keep in mind several important factors. The first is that higher GPU performance doesn’t equal better games, a better selling console or a better experience. It does, however, translate to more pixels on screen or higher frame rates. With that said, if you’re looking to buy a console and all of your buddies own an Xbox One, or you just prefer the look of Microsoft’s games, the X1 is the better buy.

Similarly, neither the PS4 nor the X1 can be judged solely on the merit of its GPU. A myriad of other factors, including the skill of the programming team, the rest of the hardware, the APIs and the application that’s being run, ultimately make a huge difference. An indie game with a retro 8-bit style will run at 60 FPS and 1080P on both, for example.

Xbox One and Playstation 4 Basic GPU Specs

AMD powers the hearts of both the X1 and PS4, as it supplies the custom APU (which is co-designed with Microsoft or Sony, respectively). The APU features a slew of components, including the CPU, Move Engines, Audio Processors and the main focus of this article: the GPU. The GPUs for both are based on AMD’s GCN (Graphics Core Next) technology. The two machines do, however, have slight differences in their GPU architecture, with the X1 being based on Bonaire and the PS4 on Pitcairn.

The GCN architecture is powerful and, like all modern GPUs, features programmable shaders which can be assigned to a variety of different tasks, be it compute (say, calculating physics) or drawing (say, drawing a blade of grass).

The Xbox One features 768 shaders, while the Playstation 4 GPU contains 1152. These are split up into CUs (Compute Units), each housing 64 Stream Processors (to give the shaders AMD’s official name). That works out to 12 active CUs for the Xbox One and 18 for the Playstation 4. Both consoles do have 2 additional CUs on the die (meaning 14 for the Xbox One, and 20 for the Playstation 4), but these are disabled to provide fault tolerance and improve yields. In other words, out of the PS4’s twenty only eighteen need to function, meaning less wasted silicon.
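
To get a feel for why spare CUs help yields, here is a rough sketch. It assumes each CU fails independently with the same probability, and the 5 percent defect rate is a made-up number purely for illustration (neither AMD, Sony nor Microsoft publish real figures). The function name is our own.

```python
from math import comb


def prob_at_least_working(total_cus, required_cus, p_defect):
    """Chance that at least `required_cus` of `total_cus` are defect-free.

    Assumes every CU fails independently with probability `p_defect`,
    which is a simplification of how real silicon defects behave.
    """
    max_bad = total_cus - required_cus
    return sum(
        comb(total_cus, bad) * p_defect**bad * (1 - p_defect) ** (total_cus - bad)
        for bad in range(max_bad + 1)
    )


P_DEFECT = 0.05  # hypothetical per-CU defect rate, for illustration only

# PS4-style die: 20 CUs built, only 18 need to work.
with_spares = prob_at_least_working(20, 18, P_DEFECT)
# Hypothetical die with exactly 18 CUs and no spares: all must work.
no_spares = prob_at_least_working(18, 18, P_DEFECT)

print(f"Usable dies with 2 spare CUs: {with_spares:.1%}")
print(f"Usable dies with no spares:   {no_spares:.1%}")
```

Under these assumed numbers, the die with two spare CUs is usable far more often than one where every CU has to be perfect, which is the whole point of disabling two of them.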

As for other factors, the Xbox One features 16 ROPs (Render Output Units), which are responsible for effectively piecing together a scene and applying post processing / Anti-Aliasing. The PS4 features 32 ROPs, double the amount. This doesn’t ‘double’ the PS4’s performance, since in theory 1080P can easily be handled by 16 ROPs, but if a game does happen to feature heavy levels of Anti-Aliasing (AA), Texture Filtering and the like, then the additional ROPs can be invaluable.

The X1 also features fewer Texture Mapping Units: 48 TMUs versus the Playstation 4’s 72. The additional TMUs mean a higher texture fill rate, a nice advantage for the PS4’s GPU spec. Its calculation is also fairly simple: the GPU’s clock speed is multiplied by the number of TMUs.
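
As a quick sketch of that fill rate sum, using the TMU counts above and the GPU clocks from the spec table further down. It assumes each TMU delivers one texel per clock, which is a peak figure rather than something games will sustain. The function name is our own.

```python
def texture_fill_rate_gtexels(tmus, clock_mhz):
    """Peak texture fill rate in GTexels/s, assuming one texel per TMU per clock."""
    return tmus * clock_mhz / 1000


# TMU counts and clock speeds as quoted in this article.
ps4_rate = texture_fill_rate_gtexels(tmus=72, clock_mhz=800)
x1_rate = texture_fill_rate_gtexels(tmus=48, clock_mhz=853)

print(f"PS4: {ps4_rate:.1f} GTexels/s")
print(f"X1:  {x1_rate:.1f} GTexels/s")
```

Note that the Xbox One’s higher clock claws back a little of the gap, but nowhere near enough to offset having a third fewer TMUs.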

Since we know the clock speeds of both GPUs, we can easily verify the peak performance numbers both Microsoft and Sony have given us. The Playstation 4’s GPU runs at 800 MHz, so you do the following: 800 MHz multiplied by 1152 shaders, multiplied by 2. This gives you 1.84 TFLOPS. For the Xbox One, the same formula (853 MHz multiplied by 768 shaders, multiplied by 2) gives a peak of 1.31 TFLOPS. In case you’re wondering, you multiply by two because each GCN shader can perform a fused multiply-add, which counts as two floating point operations, every clock.
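
Here is the same sum as a short sketch, starting from the Compute Unit counts so you can see where the shader totals come from. The function and constant names are ours, and the result is a theoretical peak, not what a game will actually achieve.

```python
SHADERS_PER_CU = 64   # Stream Processors per GCN Compute Unit
FLOPS_PER_CLOCK = 2   # one multiply-add per shader per clock, counted as 2 ops


def peak_tflops(active_cus, clock_mhz):
    """Theoretical peak single precision throughput in TFLOPS."""
    shaders = active_cus * SHADERS_PER_CU
    return shaders * FLOPS_PER_CLOCK * clock_mhz * 1e6 / 1e12


ps4_tflops = peak_tflops(active_cus=18, clock_mhz=800)
x1_tflops = peak_tflops(active_cus=12, clock_mhz=853)

print(f"PS4: {ps4_tflops:.2f} TFLOPS")  # prints "PS4: 1.84 TFLOPS"
print(f"X1:  {x1_tflops:.2f} TFLOPS")   # prints "X1:  1.31 TFLOPS"
```

Run the numbers and the Xbox One lands at roughly 1.31 TFLOPS, with the PS4 about 40 percent ahead on paper.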

Why GPU Computing is Important For Consoles

There are a few reasons compute is now a ‘big deal’ with this generation of consoles. The first has little to do with the platforms themselves, but everything to do with how games and hardware operate. Think of the CPU as a general purpose workhorse, able to accept a wide variety of different commands and process them intelligently. To do so, it has fantastic decision making potential, allowing it to handle complex program control structures. In a nutshell, this means the CPU is “smarter” and able to handle a very wide variety of tasks. The downside is that this takes up a lot of room on the CPU’s die. Certain programs or functions don’t require lots of smart decisions; instead they need raw performance.

The GPU features lots of cores (shaders), but the GPU itself isn’t that smart and requires the CPU to issue it commands. Originally, Unified Shaders were created because not all parts of the graphics pipeline are equal. In other words, the fixed functions of earlier GPUs had the inherent limitation of the GPU creator having to ‘guess’ which way game development would go. Because all game engines and games are designed differently, you would often get times where either the dedicated Vertex or Pixel Shaders were doing very little. Therefore the decision to unify the shaders into multiple flexible processors was a logical one.

Additionally, neither the PS4 nor the X1 features a particularly beefy CPU. Both feature AMD’s Jaguar, which is a low cost, low power CPU. Both consoles have a custom version, and both are in fact very similar. Each has eight CPU cores, with each core handling one hardware thread. These X86-64 CPU cores just aren’t capable of throwing their weight around too much, and are ‘quite slow’ compared to desktop CPUs according to developers.

Playstation 4 vs Xbox One GPU Compute

The PS4’s GPU has three advantages over the X1’s when it comes to GPGPU performance. The first is one we’ve already covered: the raw performance of the GPU. The higher raw FLOP count of the PS4 means more data can be processed at once, which gives the PS4 an obvious advantage.

The second area is the so-called volatile bit, which was implemented in the PS4’s Level 2 cache. It was first mentioned by the console’s lead architect, Mark Cerny, and the basic idea is that compute data is effectively ‘tagged’ in the cache. Because compute data regularly needs to be flushed or updated, this leads to a boost in performance, as the GPU can selectively do so a single line at a time rather than flushing the whole cache. The Xbox One’s GPU doesn’t feature this ability, or a similar one, as far as we know.

Finally, the big advantage is the additional ACEs inside the PS4’s GPU. ACEs (Asynchronous Compute Engines) are responsible for scheduling when compute work should be processed by the shaders. The Xbox One features two ACEs, while the Playstation 4 features eight. These, at least theoretically, improve the scheduling of GPGPU work on the PS4’s graphics processor compared to the Xbox One’s.

Each ACE holds eight queues, giving the Xbox One a total of 16, while the Playstation 4 can hold 64. This allows the GPU to schedule GPGPU commands at times when there’s less ‘stuff going on’. It’s important to remember that while this can be handled automatically by the PS4 or X1, developers do have the option to manage it themselves. More info is available in our Sucker Punch post mortem analysis of Infamous Second Son.

| Console Specs | Xbox One | Playstation 4 |
| --- | --- | --- |
| CPU Type | AMD Jaguar X86-64 | AMD Jaguar X86-64 |
| CPU Clock Speed | 1.75 GHz | 1.6 GHz (estimate; Sony won’t confirm) |
| CPU Cores / Thread Count | 8 CPU cores, 1 thread each (8 threads total) | 8 CPU cores, 1 thread each (8 threads total) |
| GPU Cores and Clock Speed | 768 Shaders From 12 Compute Units (853 MHz) | 1152 Shaders From 18 Compute Units (800 MHz) |
| System Memory | 8GB DDR3 2133 MHz | 8GB GDDR5 5500 MHz |
| Memory Bandwidth | 68.3 GB/s for the 8GB of DDR3, plus a further 204 GB/s (peak) for the 32MB eSRAM | 176 GB/s Unified Memory |
| Peak Shader Power | 1.31 TFLOPS | 1.84 TFLOPS |

Next Generation Memory Bandwidth & ESRAM

While the major focus of this article is the GPUs of both machines, there needs to be at least a brief discussion of the memory and memory bandwidth of both systems. It’s fairly common knowledge, but so we’re all on the same page: both machines utilize a 256-bit memory bus, and the difference in memory bandwidth (68GB/s vs 176GB/s) comes down to the type of memory. The PS4 uses GDDR5 while Microsoft opted to use DDR3 for the X1. Despite arguments regarding the latency of GDDR5 having a bad effect on the CPU of the PS4, in our analysis we’ve shown the difference is very small and likely won’t negatively impact the system.
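
Those bandwidth figures fall straight out of the bus width and memory speed. A minimal sketch, assuming the 2133 and 5500 figures in the spec table are effective transfer rates (millions of transfers per second) and ignoring real world overheads; the function name is our own.

```python
def peak_bandwidth_gbs(bus_width_bits, transfer_rate_mts):
    """Peak memory bandwidth in GB/s: bytes per transfer times transfers per second."""
    bytes_per_transfer = bus_width_bits / 8
    return bytes_per_transfer * transfer_rate_mts / 1000


x1_ddr3 = peak_bandwidth_gbs(bus_width_bits=256, transfer_rate_mts=2133)
ps4_gddr5 = peak_bandwidth_gbs(bus_width_bits=256, transfer_rate_mts=5500)

print(f"X1 DDR3:   {x1_ddr3:.1f} GB/s")    # prints "X1 DDR3:   68.3 GB/s"
print(f"PS4 GDDR5: {ps4_gddr5:.1f} GB/s")  # prints "PS4 GDDR5: 176.0 GB/s"
```

Same bus width on both sides, so the whole gap comes from how many transfers per second each memory type manages.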

The Xbox One does have the rather infamous eSRAM to try and make up the gap in memory bandwidth, and it’s fairly flexible. It can be used to store a massive variety of data, from render targets to textures and compute data. It’s also exclusive to the GPU, so the CPU (for instance) doesn’t get a look in. The problem isn’t its speed or exclusivity, but the fact that its 32MB of RAM is fairly constraining. A few render targets, a few assets and the eSRAM is bulging at the seams. It’s not impossible to work around, despite what critics say, and developers are understanding it better. But it adds another level of complexity to the Xbox One which, frankly, the PS4 is better off not having. For more info on this, check out our Xbox One dev day breakdown.
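
To put some rough numbers on how quickly 32MB fills up, here is a back of the envelope sketch. It assumes simple render targets at 4 bytes per pixel (for example 8 bits each for red, green, blue and alpha), treats 32MB as 32 × 1024 × 1024 bytes, and ignores any padding, alignment or compression the hardware may apply. The names are our own.

```python
ESRAM_BYTES = 32 * 1024 * 1024  # 32MB of eSRAM, taken as binary megabytes


def render_target_bytes(width, height, bytes_per_pixel=4):
    """Size of one uncompressed render target, with no padding or alignment."""
    return width * height * bytes_per_pixel


def targets_that_fit(width, height, bytes_per_pixel=4):
    """How many whole render targets of this size fit in the eSRAM."""
    return ESRAM_BYTES // render_target_bytes(width, height, bytes_per_pixel)


for name, (w, h) in {"1080P": (1920, 1080), "900P": (1600, 900)}.items():
    size_mb = render_target_bytes(w, h) / (1024 * 1024)
    print(f"{name}: {size_mb:.1f} MB per target, {targets_that_fit(w, h)} fit in eSRAM")
```

Under these assumptions only four 1080P targets fit, with next to nothing left over for textures or compute data, which goes some way to explaining why developers have to be so picky about what lives there.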

Of course, the total amount of RAM available on both consoles is another consideration. All the memory bandwidth in the world doesn’t help if the system has 1GB for developers to work with. Both the PS4 and X1 have 8GB total, but they both allocate a rather large amount for OS functions. It’s not known precisely how much is reserved on the Xbox One. What we do know is that the Playstation 4 has 4.5GB of available memory, with an additional 512MB of RAM as flexible memory, and virtual memory too. For more info check out our Infamous Analysis.

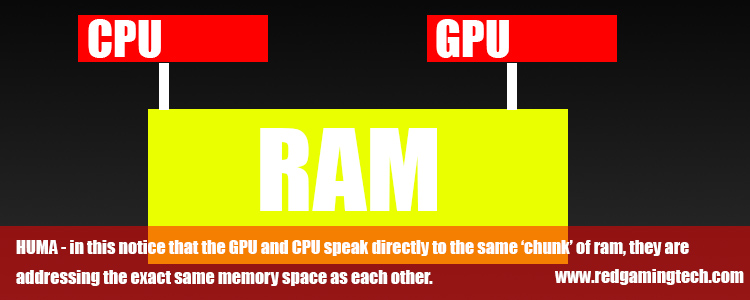

One area where the PS4 does have an advantage is that its HUMA design is a little more advanced than the Xbox One’s. For more info, see our article on the Playstation 4’s HUMA.

DirectX12 vs GNM – the API Factor

DX12’s impact on the Xbox One is up for debate, with some heralding it as the Xbox One’s messiah and others keen to quickly sweep it under the rug. In reality, we’ll probably end up somewhere in between the two. The Xbox One’s API is already fairly low level, with Microsoft pointing out there are extensions to “take you to the metal” (which is such a trendy phrase these days). The Xbox One’s DX11.2 actually has a few DX12 features already (see the dev day link above for more info on that).

The GNMX and GNM APIs from Sony are proving to be extremely popular with developers and easy to use. GNMX is a simplified version of the API, hiding many of the custom features behind a “high level language”. In effect, it makes the console user friendly and easy to develop for. GNM is low level, and allows developers to better control each and every aspect of the GPU.

It’s hard to know which API or SDK is better. Early on, Sony’s software ran rings around Microsoft’s, but recent improvements to the SDK have left the Xbox One in a much better position. Of course, the June 2014 SDK update has also beefed up the amount of GPU performance available to developers. Previously 10 percent was cordoned off to allow Kinect to do its thing, whereas now developers can use that to move pixels around on screen instead.

One advantage that’s hard to argue with is that porting PC code over to the Xbox One will certainly become a lot easier. It’ll of course still mean work to optimize the game for the system’s memory configuration and lower GPU performance (compared to a PC), but it’s certainly a nice feature.

The Verdict

The Playstation 4’s GPU is faster and has a number of little tricks up its sleeve which help it stay on top. Ultimately though, making use of the hardware really comes down to the developer in question. In the case of indie games, for example, the additional GPU performance won’t really help. The games aren’t graphically or GPGPU compute intensive enough to tax either console’s CPU or graphics hardware, so it won’t make a difference. Although there are lots of websites quoting developers who’re boasting of 1080P / 60FPS in their games, it’s not a very good yardstick.

The PS4’s GPU can push a greater number of pixels (or push the same number of pixels but faster), but the real advantage will likely come from the Playstation 4’s first party developers. Bungie and other devs usually like to play the parity card, and even during our own testing of, say, Ubisoft’s Watch Dogs, neither the graphics nor the frame rates were that much different. Slightly nicer looking shadows here or there, but nothing to write home about. With that said, Sniper Elite 3 is graphically superior on the PS4, but mostly because of the better AF and higher frame rate.

Taking a look at our explanation of how frame rate and resolutions work, you’ll see that simply going from 720P to 792P requires the GPU to do about 1.21 times more work. Meanwhile, 900P to 1080P is 1.44 times more work… or, around the difference between the Xbox One and the Playstation 4’s GPU specs.
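
Those ratios are just pixel counts divided by one another. A quick sketch, assuming 16:9 resolutions and that GPU work scales directly with the number of pixels drawn (a simplification, since not every part of a frame scales with resolution); the function names are ours.

```python
def pixel_count(height):
    """Total pixels in a 16:9 frame of the given height (e.g. 1080 -> 1920x1080)."""
    width = height * 16 // 9
    return width * height


def work_ratio(from_height, to_height):
    """How many times more pixels the GPU has to draw at the higher resolution."""
    return pixel_count(to_height) / pixel_count(from_height)


print(f"720P  -> 792P:  {work_ratio(720, 792):.2f}x the pixels")    # 1.21x
print(f"900P  -> 1080P: {work_ratio(900, 1080):.2f}x the pixels")   # 1.44x
```

For comparison, dividing the two consoles’ peak shader figures gives a gap of roughly 1.4 times, which is why 900P on one machine versus 1080P on the other crops up so often.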

Boiling all of this down to the basics: don’t buy a console based purely on how fast its GPU is. Do buy a console based on the platform exclusives, on which one offers you the better experience and perhaps also on which one your friends own. If you’re judging the systems purely on their ability to ‘look the best’ then really, you’d be better off with a gaming PC.

As we regularly put together PC component buyer’s guides, we’ve shown that for around the $700 mark you can build a very powerful gaming PC, complete with a GTX 770 (easily capable of playing at 1440P in most games). Even a mid range GPU offers over double the performance of a next generation console. My point is simple: buy a console for its unique features first and foremost, and worry about the shiny graphics second.